Technology

AI suggested 40,000 new possible chemical weapons in just six hours

It took less than six hours for drug-developing AI to invent 40,000 potentially lethal molecules. Researchers put AI normally used to search for helpful drugs into a kind of “bad actor” mode to show how easily it could be abused at a biological arms control conference.

All the researchers had to do was tweak their methodology to seek out, rather than weed out, toxicity. The AI came up with tens of thousands of new substances, some of which are similar to VX, the most potent nerve agent ever developed. Shaken, they published their findings this month in the journal Nature Machine Intelligence.

The paper had us at The Verge a little shook, too. So, to figure out how worried we should be, The Verge spoke with Fabio Urbina, lead author of the paper. He’s also a senior scientist at Collaborations Pharmaceuticals, Inc., a company that focuses on finding drug treatments for rare diseases.

This interview has been lightly edited for length and clarity.

This paper seems to flip your normal work on its head. Tell me about what you do in your day-to-day job.

Primarily, my job is to implement new machine learning models in the area of drug discovery. A large fraction of the machine learning models that we use are meant to predict toxicity. No matter what kind of drug you’re trying to develop, you need to make sure that it’s not going to be toxic. If it turns out that you have this wonderful drug that lowers blood pressure fantastically, but it hits one of these really important, say, heart channels, then basically, it’s a no-go because that’s just too dangerous.

So then, why did you do this study on biochemical weapons? What was the spark?

We got an invite to the Convergence conference by the Swiss Federal Institute for Nuclear, Biological and Chemical Protection, Spiez Laboratory. The idea of the conference is to inform the community at large of new developments with tools that may have implications for the Chemical/Biological Weapons Convention.

We got this invite to talk about machine learning and how it can be misused in our space. It’s something we never really thought about before. But it was just very easy to realize that as we’re building these machine learning models to get better and better at predicting toxicity in order to avoid toxicity, all we have to do is sort of flip the switch around and say, “Instead of going away from toxicity, what if we do go toward toxicity?”

Can you walk me through how you did that, how you moved the model to go toward toxicity?

I’ll be a little vague with some details because we were told basically to withhold some of the specifics. Broadly, the way it works for this experiment is that we have a lot of historical datasets of molecules that have been tested to see whether they’re toxic or not.

In particular, the one that we focus on here is VX. It is an inhibitor of what’s known as acetylcholinesterase. Whenever you do anything muscle-related, your neurons use acetylcholinesterase as a signal to basically say “go move your muscles.” The way VX is lethal is it actually stops your diaphragm, your lung muscles, from being able to move, so your lungs become paralyzed.

Obviously, this is something you want to avoid. So historically, experiments have been done with different types of molecules to see whether they inhibit acetylcholinesterase. And so, we built up these large datasets of these molecular structures and how toxic they are.

We can use these datasets to create a machine learning model, which basically learns what parts of the molecular structure are important for toxicity and which are not. Then we can give this machine learning model new molecules, potentially new drugs that maybe have never been tested before. And it will tell us this is predicted to be toxic, or this is predicted not to be toxic. This is a way for us to virtually screen a lot of molecules very, very fast and sort of kick out ones that are predicted to be toxic. In our study here, what we did is we inverted that, obviously, and we use this model to try to predict toxicity.

The other key part of what we did here are these new generative models. We can give a generative model a whole lot of different structures, and it learns how to put molecules together. And then we can, in a sense, ask it to generate new molecules. Now it can generate new molecules all over the space of chemistry, and they’re just sort of random molecules. But one thing we can do is we can actually tell the generative model which direction we want to go. We do that by giving it a little scoring function, which gives it a high score if the molecules it generates are towards something we want. Instead of giving a low score to toxic molecules, we give a high score to toxic molecules.

Now we see the model start producing all of these molecules, a lot of which look like VX and also like other chemical warfare agents.

Tell me more about what you found. Did anything surprise you?

We weren’t really sure what we were going to get. Our generative models are fairly new technologies. So we haven’t widely used them a lot.

The biggest thing that jumped out at first was that a lot of the generated compounds were predicted to be actually more toxic than VX. And the reason that’s surprising is because VX is basically one of the most potent compounds known. Meaning you need a very, very, very little amount of it to be lethal.

Now, these are predictions that we haven’t verified, and we certainly don’t want to verify that ourselves. But the predictive models are generally pretty good. So even if there’s a lot of false positives, we’re afraid that there are some more potent molecules in there.

Second, we actually looked at a lot of the structures of these newly generated molecules. And a lot of them did look like VX and other warfare agents, and we even found some that were generated from the model that were actual chemical warfare agents. These were generated from the model having never seen these chemical warfare agents. So we knew we were sort of in the right space here and that it was generating molecules that made sense because some of them had already been made before.

For me, the concern was just how easy it was to do. A lot of the things we used are out there for free. You can go and download a toxicity dataset from anywhere. If you have somebody who knows how to code in Python and has some machine learning capabilities, then in probably a good weekend of work, they could build something like this generative model driven by toxic datasets. So that was the thing that got us really thinking about putting this paper out there; it was such a low barrier of entry for this type of misuse.

Your paper says that by doing this work, you and your colleagues “have still crossed a gray moral boundary, demonstrating that it is possible to design virtual potential toxic molecules without much in the way of effort, time or computational resources. We can easily erase the thousands of molecules we created, but we cannot delete the knowledge of how to recreate them.” What was running through your head as you were doing this work?

This was quite an unusual publication. We’ve been back and forth a bit about whether we should publish it or not. This is a potential misuse that didn’t take as much time to perform. And we wanted to get that information out since we really didn’t see it anywhere in the literature. We looked around, and nobody was really talking about it. But at the same time, we didn’t want to give the idea to bad actors.

At the end of the day, we decided that we kind of want to get ahead of this. Because if it’s possible for us to do it, it’s likely that some adversarial agent somewhere is maybe already thinking about it or in the future is going to think about it. By then, our technology may have progressed even beyond what we can do now. And a lot of it’s just going to be open source, which I fully support: the sharing of science, the sharing of data, the sharing of models. But it’s one of these things where we, as scientists, should take care that what we release is done responsibly.

How easy is it for someone to replicate what you did? What would they need?

I don’t want to sound very sensationalist about this, but it is fairly easy for someone to replicate what we did.

If you were to Google generative models, you could find a number of put-together one-liner generative models that people have released for free. And then, if you were to search for toxicity datasets, there’s a large number of open-source tox datasets. So if you just combine those two things, and you know how to code and build machine learning models (all that requires really is an internet connection and a computer), then you could easily replicate what we did. And not just for VX, but for pretty much whatever other open-source toxicity datasets exist.

Of course, it does require some expertise. If somebody were to put this together without knowing anything about chemistry, they would ultimately probably generate stuff that was not very useful. And there’s still the next step of having to get those molecules synthesized. Finding a potential drug or potential new toxic molecule is one thing; the next step of synthesis, actually creating a new molecule in the real world, would be another barrier.

Right, there are still some big leaps between what the AI comes up with and turning that into a real-world threat. What are the gaps there?

The big gap to start with is that you really don’t know if these molecules are actually toxic or not. There’s going to be some amount of false positives. If we’re walking ourselves through what a bad agent would be thinking or doing, they would have to make a decision on which of these new molecules they would want to synthesize ultimately.

As far as synthesis routes, this could be a make it or break it. If you find something that looks like a chemical warfare agent and try to get that synthesized, chances are it’s not going to happen. A lot of the chemical building blocks of these chemical warfare agents are well known and are watched. They’re regulated. But there’s so many synthesis companies. As long as it doesn’t look like a chemical warfare agent, they’re most likely going to just synthesize it and send it right back because who knows what the molecule is being used for, right?

You get at this later in the paper, but what can be done to prevent this kind of misuse of AI? What safeguards would you like to see established?

For context, there are more and more policies about data sharing. And I completely agree with it because it opens up more avenues for research. It allows other researchers to see your data and use it for their own research. But at the same time, that also includes things like toxicity datasets and toxicity models. So it’s a little hard to figure out a good solution for this problem.

We looked over towards Silicon Valley: there’s a group called OpenAI; they released a top-of-the-line language model called GPT-3. It’s almost like a chatbot; it basically can generate sentences and text that is almost indistinguishable from humans. They actually let you use it for free whenever you want, but you have to get a special access token from them to do so. At any point, they could cut off your access from those models. We were thinking something like that could be a useful starting point for potentially sensitive models, such as toxicity models.

Science is all about open communication, open access, open data sharing. Restrictions are antithetical to that notion. But a step going forward could be to at least responsibly account for who’s using your resources.

Your paper also says that “[w]ithout being overly alarmist, this should serve as a wake-up call for our colleagues.” What is it that you want your colleagues to wake up to? And what do you think that being overly alarmist would look like?

We just want more researchers to acknowledge and be aware of potential misuse. When you start working in the chemistry space, you do get informed about misuse of chemistry, and you’re sort of responsible for making sure you avoid that as much as possible. In machine learning, there’s nothing of the sort. There’s no guidance on misuse of the technology.

So putting that awareness out there could help people really be mindful of the issue. Then it’s at least talked about in broader circles and can at least be something that we watch out for as we get better and better at building toxicity models.

I don’t want to propose that machine learning AI is going to start creating toxic molecules and there’s going to be a slew of new biochemical warfare agents just around the corner. That somebody clicks a button and then, you know, chemical warfare agents just sort of appear in their hand.

I don’t want to be alarmist in saying that there’s going to be AI-driven chemical warfare. I don’t think that’s the case now. I don’t think it’s going to be the case anytime soon. But it’s something that’s starting to become a possibility.

Apple’s next AirTag could arrive in 2025

You may not have even thought about replacing your AirTag yet, but Bloomberg reports that Apple is working on a new one that could arrive in mid-2025. The new AirTag will reportedly feature an updated chip with better location tracking — an improvement it might need as competition among tracking devices ramps up.

By the time Apple rolls out its refreshed AirTag, the Bluetooth tracking landscape will look a lot different on both Android and iOS. Last month, Google revealed its new Find My Device network, which lets users locate their phones using signals from nearby Android devices. Even Life360, the safety service company that owns Tile, is creating its own location-tracking network that uses satellites to locate its Bluetooth tags.

In last week’s iOS 17.5 update, Apple finally started letting iPhones show unwanted tracking alerts for third-party Bluetooth tags. If an unknown AirTag or other third-party tracker is found traveling with an iPhone user, they’ll get an alert and can play a sound to locate it. The feature is part of an industry specification created to prevent stalking across iPhones and Android devices. Several companies that make Bluetooth tracking devices, including Chipolo, Pebblebee, and Eufy, are on board with the new standard.

How to connect your AirPods to your iPhone, iPad the easy way

While AirPods work with most devices that have a Bluetooth connection, their most useful features really shine when you connect them to other Apple devices. If you use the same Apple ID across all your Apple devices, you can take full advantage of the seamless auto-connect features.

How to connect your AirPods to your iPhone

Before you start, make sure you’ve installed the latest version of iOS on your iPhone and be sure your AirPods are charged and in their case. If you’ve already set up your AirPods with another Apple device, such as your Mac, they should connect to your iPhone automatically as long as you are signed in with the same Apple ID. If not, here’s how to connect them to your iPhone.

- Unlock your iPhone and go to Settings

- Scroll down and tap Bluetooth, then turn on Bluetooth (if it isn’t already on)

- The toggle next to Bluetooth should be green, not grayed out.

Steps to connect your AirPods to your iPhone (Kurt “CyberGuy” Knutsson)

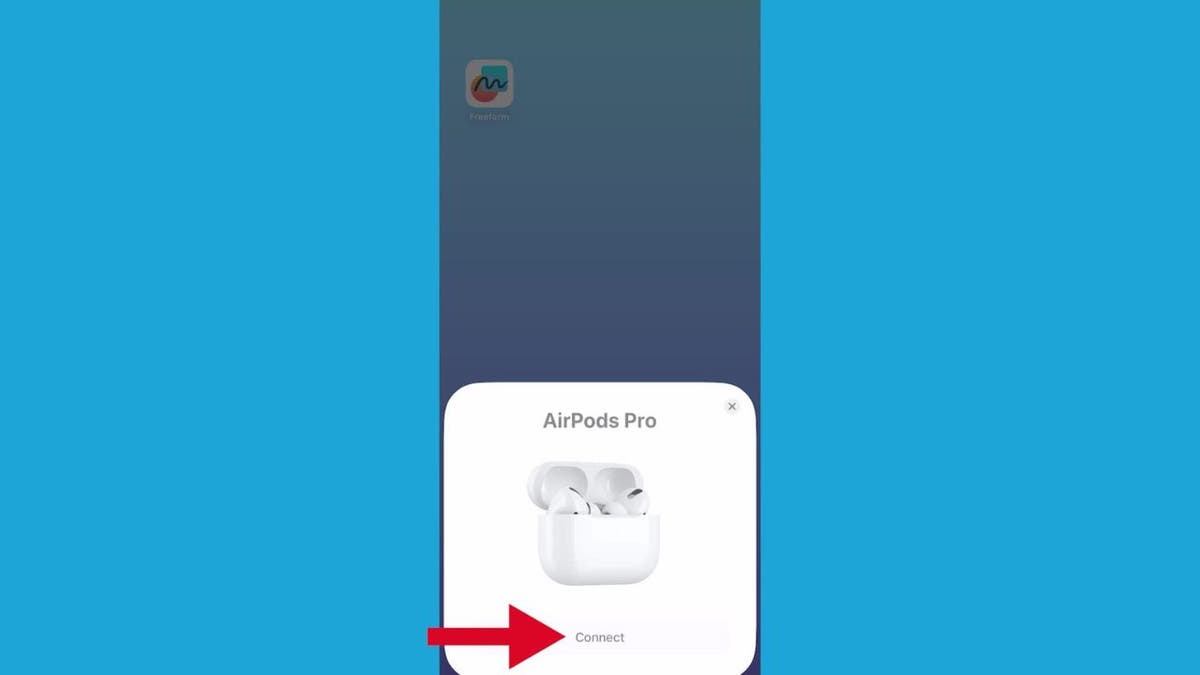

- Now, grab your AirPods case with the AirPods inside, then hold it next to your iPhone with the case top open.

- At this point, a setup animation will show up on your iPhone screen.

- Tap Connect and you should be ready to listen.

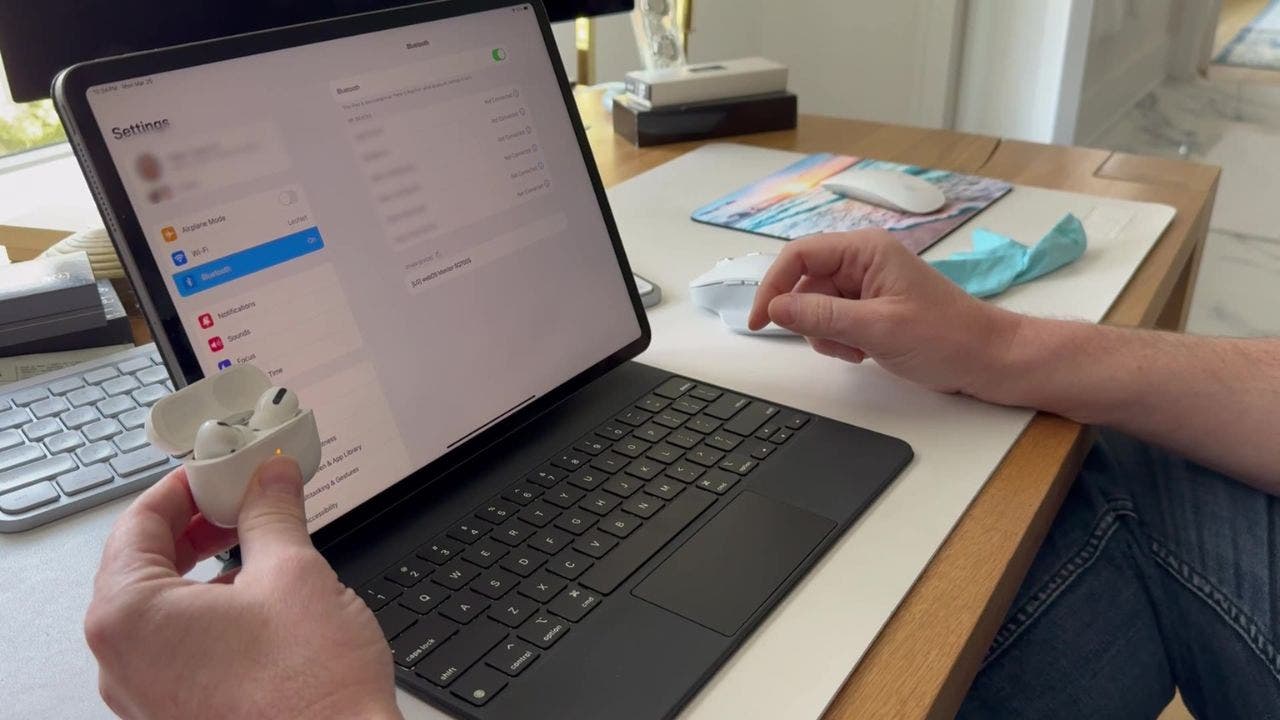

How to connect your AirPods to your iPad

Before you start, make sure you’ve installed the latest version of iPadOS on your iPad and be sure your AirPods are charged and in their case. If you’ve already set up your AirPods with another Apple device, such as your Mac, they should connect to your iPad automatically as long as you are signed in with the same Apple ID. If not, here’s how to connect them to your iPad.

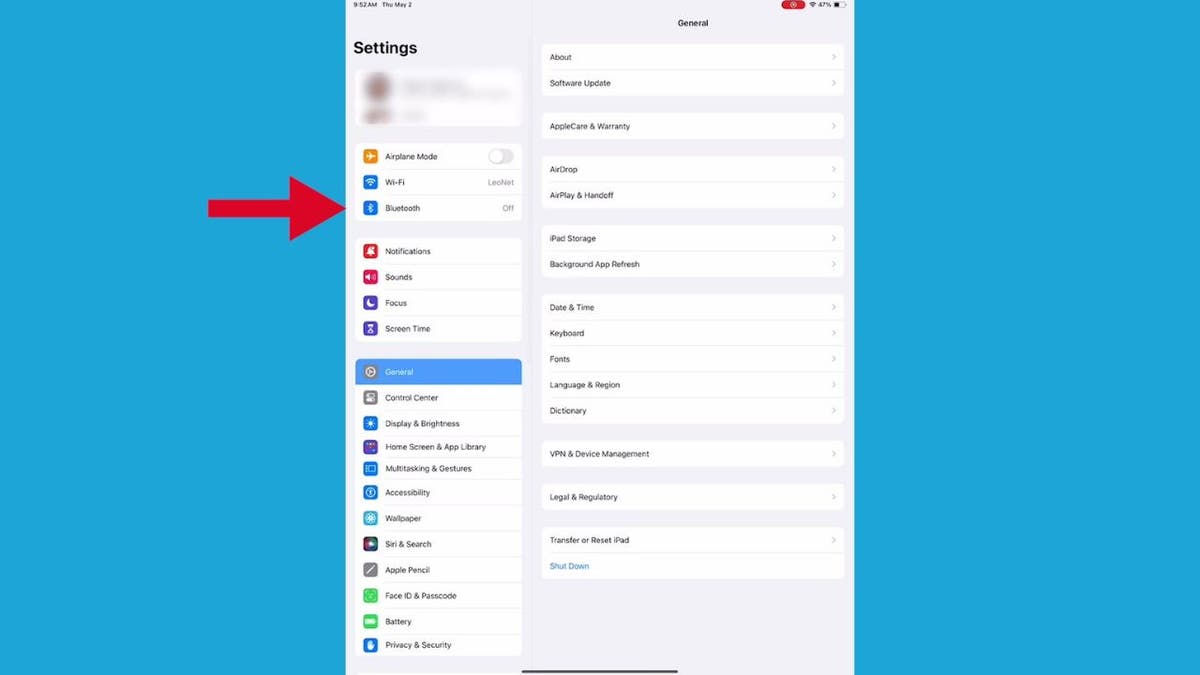

- Open up your iPad and go to Settings.

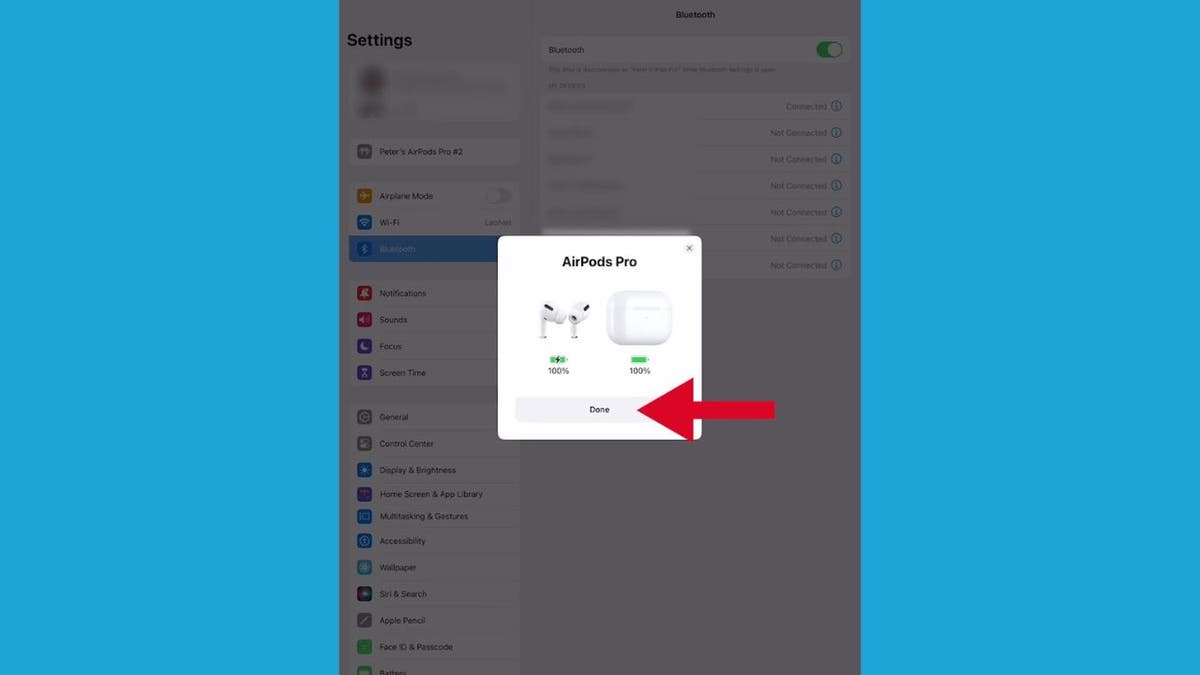

Steps to connect your AirPods to your iPad (Kurt “CyberGuy” Knutsson)

- From Settings, scroll down and tap Bluetooth.

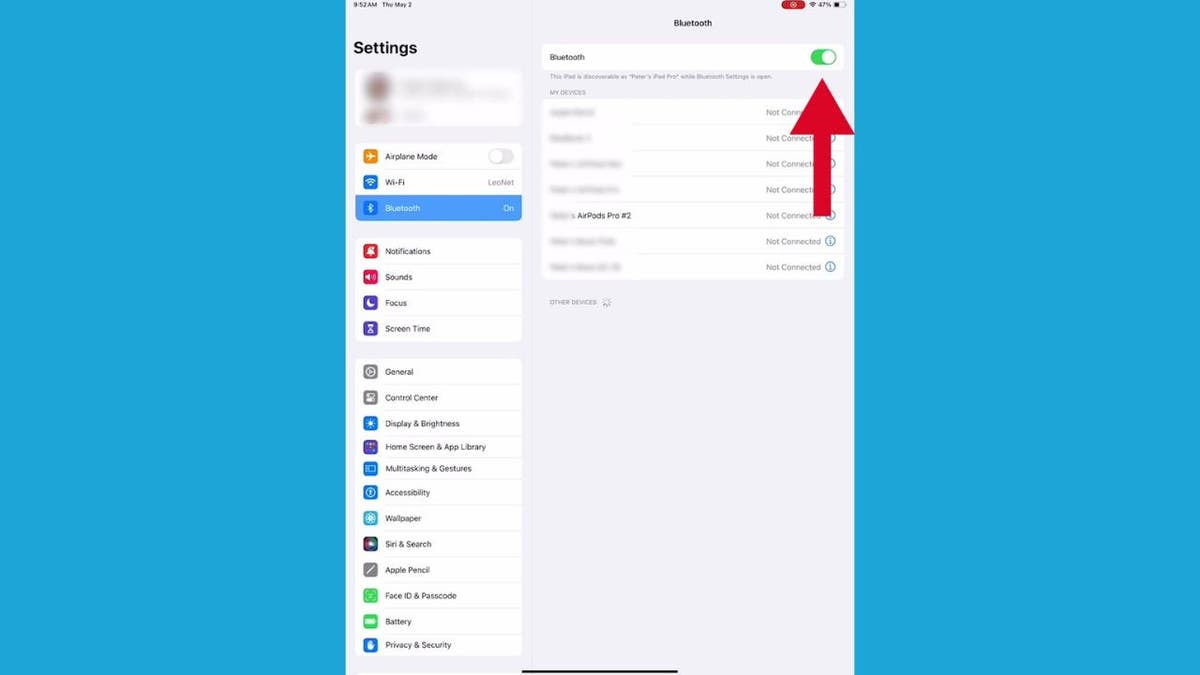

- Then, tap the button on the right once so that it turns green.

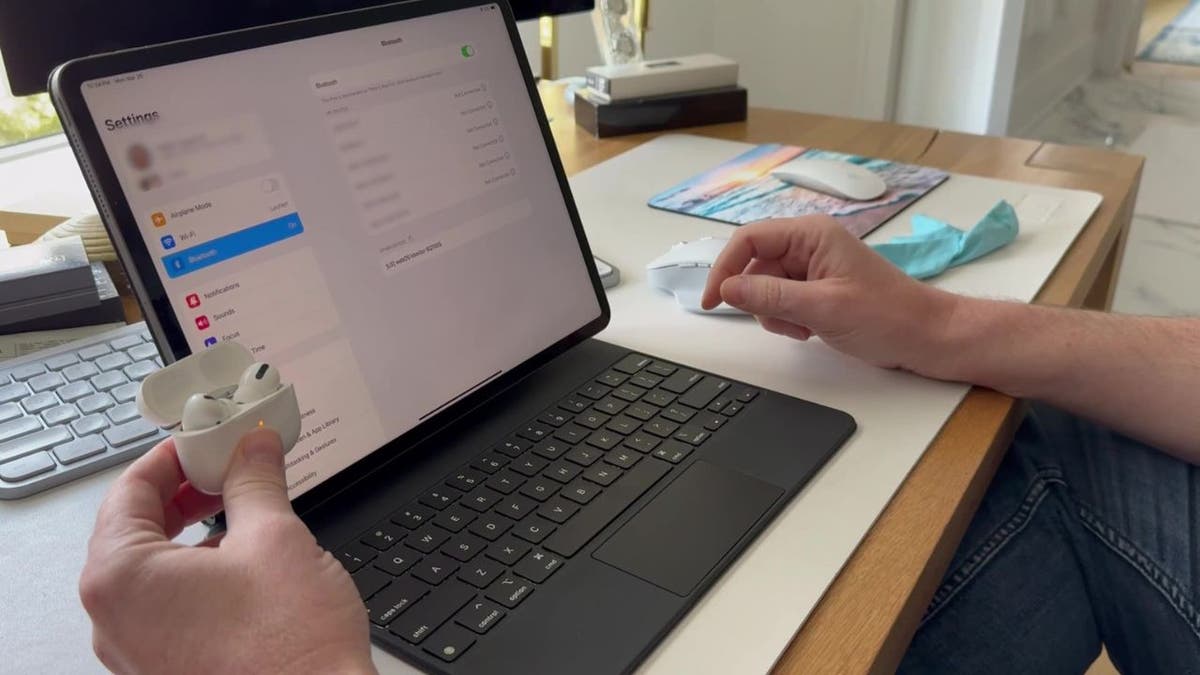

- Keep your iPad open to this screen and take out your AirPods.

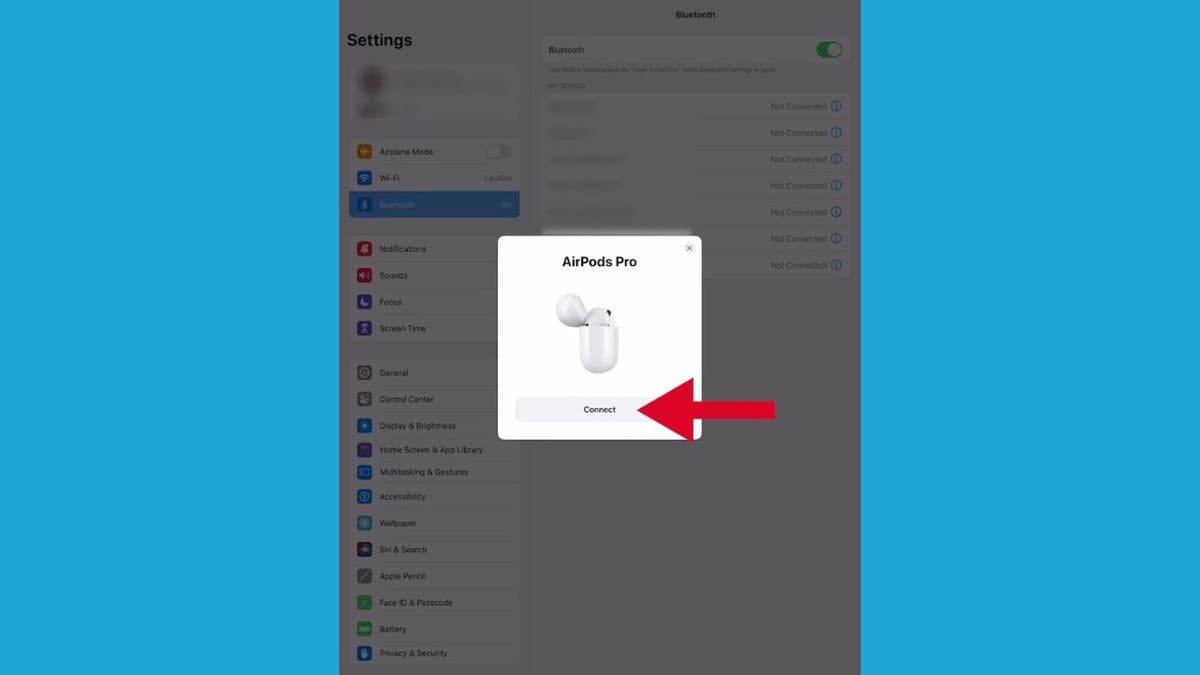

- From here, a setup animation will appear on the iPad. Tap Connect.

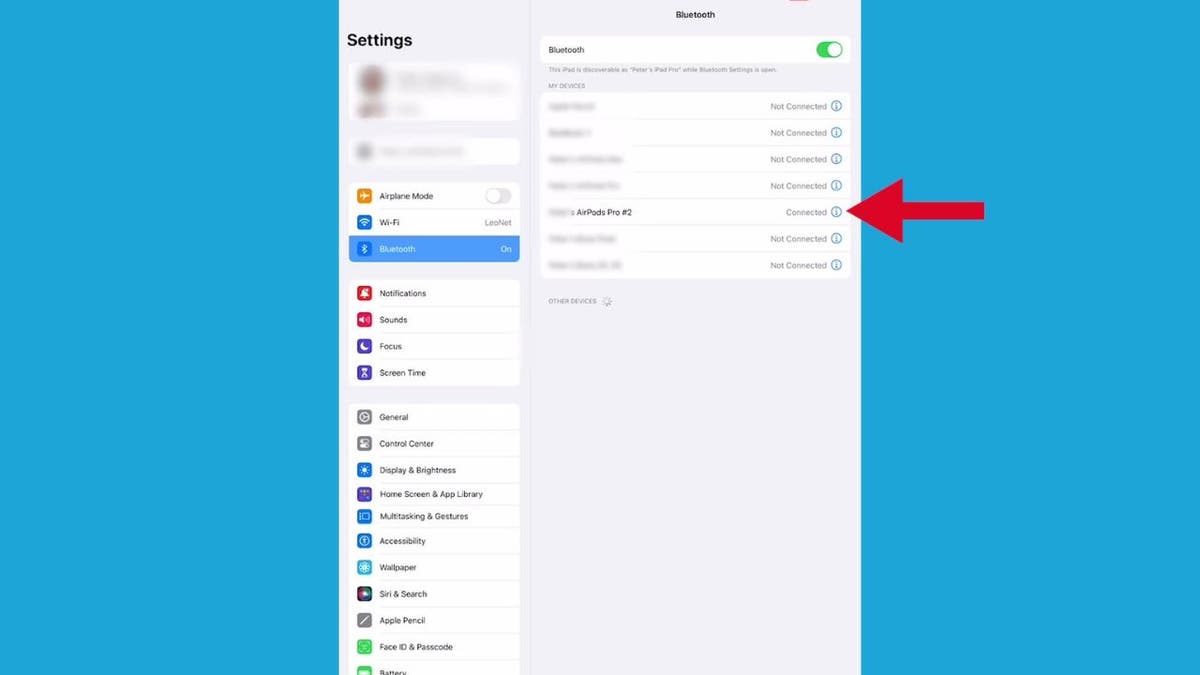

- Your AirPods should appear under the list of available devices in your Bluetooth settings on the iPad. Now tap your AirPods, and there you go.

Kurt’s key takeaways

In a nutshell, AirPods are popular because they perform well, are reliable and are easy to use, especially if you’ve already got other Apple products in your life. They just get you, you know? They move between your iPad and your iPhone without a hitch – it’s like they’ve got a mind of their own. And setting them up is super simple. It’s like tap, tap, boom – you’re connected.

In what ways do you think the AirPods’ features could be further enhanced when paired with Apple devices? Let us know by writing us at Cyberguy.com/Contact.

Copyright 2024 CyberGuy.com. All rights reserved.

Two students find security bug that could let millions do laundry for free

A security lapse could let millions of college students do free laundry, thanks to one company. That’s because of a vulnerability that two University of California, Santa Cruz students found in internet-connected washing machines in commercial use in several countries, according to TechCrunch.

The two students, Alexander Sherbrooke and Iakov Taranenko, apparently exploited an API for the machines’ app to do things like remotely command them to work without payment and update a laundry account to show it had millions of dollars in it. The company that owns the machines, CSC ServiceWorks, claims to have more than a million laundry and vending machines in service at colleges, multi-housing communities, laundromats, and more in the US, Canada, and Europe.

CSC never responded when Sherbrooke and Taranenko reported the vulnerability via emails and a phone call in January, TechCrunch writes. Despite that, the students told the outlet that the company “quietly wiped out” their false millions after they contacted it.

The lack of response led them to tell others about their findings. Among them: the company has a published list of commands, which the two told TechCrunch enables connecting to all of CSC’s network-connected laundry machines. CSC ServiceWorks didn’t immediately respond to The Verge’s request for comment.

CSC’s vulnerability is a good reminder that the security situation with the internet of things still isn’t sorted out. For the exploit the students found, maybe CSC shoulders the risk, but in other cases, lax cybersecurity practices have made it possible for hackers or company contractors to view strangers’ security camera footage or gain access to smart plugs.

Often, security researchers find these security holes and report them before they can be exploited in the wild. But that’s not helpful if the company responsible for them doesn’t respond.
