Science

When A.I.’s Output Is a Threat to A.I. Itself

The internet is becoming awash in words and images generated by artificial intelligence.

Sam Altman, OpenAI’s chief executive, wrote in February that the company generated about 100 billion words per day — a million novels’ worth of text, every day, an unknown share of which finds its way onto the internet.

A.I.-generated text may show up as a restaurant review, a dating profile or a social media post. And it may show up as a news article, too: NewsGuard, a group that tracks online misinformation, recently identified over a thousand websites that churn out error-prone A.I.-generated news articles.

In reality, with no foolproof methods to detect this kind of content, much will simply remain undetected.

All this A.I.-generated information can make it harder for us to know what’s real. And it also poses a problem for A.I. companies. As they trawl the web for new data to train their next models on — an increasingly challenging task — they’re likely to ingest some of their own A.I.-generated content, creating an unintentional feedback loop in which what was once the output from one A.I. becomes the input for another.

In the long run, this cycle may pose a threat to A.I. itself. Research has shown that when generative A.I. is trained on a lot of its own output, it can get a lot worse.

Here’s a simple illustration of what happens when an A.I. system is trained on its own output, over and over again:

This is part of a data set of 60,000 handwritten digits.

When we trained an A.I. to mimic those digits, its output looked like this.

This new set was made by an A.I. trained on the previous A.I.-generated digits. What happens if this process continues? After 20 generations of training new A.I.s on their predecessors’ output, the digits blur and start to erode.

After 30 generations, they converge into a single shape.

While this is a simplified example, it illustrates a problem on the horizon.

Imagine a medical-advice chatbot that lists fewer diseases that match your symptoms, because it was trained on a narrower spectrum of medical knowledge generated by previous chatbots. Or an A.I. history tutor that ingests A.I.-generated propaganda and can no longer separate fact from fiction.

Just as a copy of a copy can drift away from the original, when generative A.I. is trained on its own content, its output can also drift away from reality, growing further apart from the original data that it was intended to imitate.

In a paper published last month in the journal Nature, a group of researchers in Britain and Canada showed how this process results in a narrower range of A.I. output over time — an early stage of what they called “model collapse.”

The eroding digits we just saw show this collapse. When untethered from human input, the A.I. output dropped in quality (the digits became blurry) and in diversity (they grew similar).

How an A.I. that draws digits “collapses” after being trained on its own output

If only some of the training data were A.I.-generated, the decline would be slower or more subtle. But it would still occur, researchers say, unless the synthetic data was complemented with a lot of new, real data.
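A toy sketch of this effect, and not the researchers' experiment: each generation fits a bell curve (a mean and a spread) to its training data, then builds the next generation's training data by sampling from that fit. The function name `run_generations`, the parameter `real_fraction` and the sample sizes are all illustrative assumptions.

```python
import random
import statistics


def run_generations(real_fraction, generations=300, n=20, seed=0):
    """Toy model-collapse loop on one-dimensional data.

    Each generation fits a Gaussian (mean, spread) to its training set,
    then builds the next training set from samples of that fit, mixed
    with a share of fresh "real" data. Returns the spread per generation.
    """
    rng = random.Random(seed)

    def real(k):
        # Stand-in for human-made data: a standard bell curve.
        return [rng.gauss(0.0, 1.0) for _ in range(k)]

    n_real = round(real_fraction * n)
    data = real(n)
    spreads = []
    for _ in range(generations):
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        spreads.append(sigma)
        # Synthetic samples come from the fitted curve, not the real one.
        synthetic = [rng.gauss(mu, sigma) for _ in range(n - n_real)]
        data = synthetic + real(n_real)
    return spreads


pure = run_generations(real_fraction=0.0)   # trained only on its own output
mixed = run_generations(real_fraction=0.5)  # half fresh real data each round
print(f"pure synthetic: spread {pure[0]:.3f} -> {pure[-1]:.3g}")
print(f"half real data: spread {mixed[0]:.3f} -> {mixed[-1]:.3g}")
```

In this toy setup, the chain trained only on its own output should shrink toward a single value, while the chain that keeps receiving fresh samples should hold on to a spread much closer to the original.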

Degenerative A.I.

In one example, the researchers trained a large language model on its own sentences over and over again, asking it to complete the same prompt after each round.

When they asked the A.I. to complete a sentence that started with “To cook a turkey for Thanksgiving, you…,” at first, it responded like this:

Even at the outset, the A.I. “hallucinates.” But when the researchers further trained it on its own sentences, it got a lot worse…

After two generations, it started simply printing long lists.

An example of text generated by an A.I. model after being trained on its own sentences for 2 generations.

And after four generations, it began to repeat phrases incoherently.

An example of text generated by an A.I. model after being trained on its own sentences for 4 generations.

“The model becomes poisoned with its own projection of reality,” the researchers wrote of this phenomenon.

This problem isn’t just confined to text. Another team of researchers at Rice University studied what would happen when the kinds of A.I. that generate images are repeatedly trained on their own output — a problem that could already be occurring as A.I.-generated images flood the web.

They found that glitches and image artifacts started to build up in the A.I.’s output, eventually producing distorted images with wrinkled patterns and mangled fingers.

When A.I. image models are trained on their own output, they can produce distorted images, mangled fingers or strange patterns.

A.I.-generated images by Sina Alemohammad and others.

“You’re kind of drifting into parts of the space that are like a no-fly zone,” said Richard Baraniuk, a professor who led the research on A.I. image models.

The researchers found that the only way to stave off this problem was to ensure that the A.I. was also trained on a sufficient supply of new, real data.

While selfies are certainly not in short supply on the internet, there could be categories of images where A.I. output outnumbers genuine data, they said.

For example, A.I.-generated images in the style of van Gogh could outnumber actual photographs of van Gogh paintings in A.I.’s training data, and this may lead to errors and distortions down the road. (Early signs of this problem will be hard to detect because the leading A.I. models are closed to outside scrutiny, the researchers said.)

Why collapse happens

All of these problems arise because A.I.-generated data is often a poor substitute for the real thing.

This is sometimes easy to see, like when chatbots state absurd facts or when A.I.-generated hands have too many fingers.

But the differences that lead to model collapse aren’t necessarily obvious — and they can be difficult to detect.

When generative A.I. is “trained” on vast amounts of data, what’s really happening under the hood is that it is assembling a statistical distribution — a set of probabilities that predicts the next word in a sentence, or the pixels in a picture.
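The simplest possible version of "a set of probabilities that predicts the next word" is a table of counts. The sketch below is only an illustration: real language models use neural networks rather than count tables, and the function name `next_word_probabilities` and the tiny corpus are made up for this example.

```python
from collections import Counter, defaultdict


def next_word_probabilities(text):
    """Estimate P(next word | current word) from raw counts."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    # Count how often each word is followed by each other word.
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    # Turn each row of counts into probabilities that sum to 1.
    return {
        word: {nxt: c / sum(followers.values()) for nxt, c in followers.items()}
        for word, followers in counts.items()
    }


corpus = "the cat sat on the mat and the cat slept"
probs = next_word_probabilities(corpus)
print(probs["the"])  # "cat" follows "the" twice, "mat" once
```

Words that never appear in the training text get no probability at all in this table, which hints at why a model trained on a narrower set of text can only produce a narrower range of output.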

For example, when we trained an A.I. to imitate handwritten digits, its output could be arranged into a statistical distribution that looks like this:

Distribution of A.I.-generated data, with examples of initial A.I. output. The distribution shown here is simplified for clarity.

The peak of this bell-shaped curve represents the most probable A.I. output — in this case, the most typical A.I.-generated digits. The tail ends describe output that is less common.

Notice that when the model was trained on human data, it had a healthy spread of possible outputs, which you can see in the width of the curve above.

But after it was trained on its own output, this is what happened to the curve:

Distribution of A.I.-generated data when trained on its own output

It gets taller and narrower. As a result, the model becomes more and more likely to produce a smaller range of output, and the output can drift away from the original data.

Meanwhile, the tail ends of the curve — which contain the rare, unusual or surprising outcomes — fade away.

This is a telltale sign of model collapse: Rare data becomes even rarer.

If this process went unchecked, the curve would eventually become a spike:

Distribution of A.I.-generated data when trained on its own output

This was when all of the digits became identical, and the model completely collapsed.
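One way to see why the curve narrows, under a deliberately simple assumption that is ours and not the researchers': suppose each generation is a bell curve fitted by maximum likelihood to $n$ samples drawn from the previous generation's curve. Writing $\sigma_t^2$ for the variance at generation $t$, the fitted variance is, on average, slightly smaller than the variance it was sampled from, and the shortfall compounds:

$$
\mathbb{E}\left[\sigma_{t+1}^2 \mid \sigma_t^2\right] = \frac{n-1}{n}\,\sigma_t^2
\quad\Longrightarrow\quad
\mathbb{E}\left[\sigma_t^2\right] = \left(\frac{n-1}{n}\right)^{t} \sigma_0^2 \longrightarrow 0 \text{ as } t \to \infty .
$$

The digit-drawing model is far more complicated than a single bell curve, so this is only an analogy for the narrowing, not a description of what happened inside it.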

Why it matters

This doesn’t mean generative A.I. will grind to a halt anytime soon.

The companies that make these tools are aware of these problems, and they will notice if their A.I. systems start to deteriorate in quality.

But it may slow things down. As existing sources of data dry up or become contaminated with A.I. “slop,” researchers say, it becomes harder for newcomers to compete.

A.I.-generated words and images are already beginning to flood social media and the wider web. They’re even hiding in some of the data sets used to train A.I., the Rice researchers found.

“The web is becoming increasingly a dangerous place to look for your data,” said Sina Alemohammad, a graduate student at Rice who studied how A.I. contamination affects image models.

Big players will be affected, too. Computer scientists at N.Y.U. found that when there is a lot of A.I.-generated content in the training data, it takes more computing power to train A.I. — which translates into more energy and more money.

“Models won’t scale anymore as they should be scaling,” said Julia Kempe, the N.Y.U. professor who led this work.

The leading A.I. models already cost tens to hundreds of millions of dollars to train, and they consume staggering amounts of energy, so this can be a sizable problem.

‘A hidden danger’

Finally, there’s another threat posed by even the early stages of collapse: an erosion of diversity.

And it’s an outcome that could become more likely as companies try to avoid the glitches and “hallucinations” that often occur with A.I. data.

This is easiest to see when the data matches a form of diversity that we can visually recognize — people’s faces:

This set of A.I. faces was created by the same Rice researchers who produced the distorted faces above. This time, they tweaked the model to avoid visual glitches.

A grid of A.I.-generated faces showing variations in their poses, expressions, ages and races.

This is the output after they trained a new A.I. on the previous set of faces. At first glance, it may seem like the model changes worked: The glitches are gone.

After one generation of training on A.I. output, the A.I.-generated faces appear more similar.

After two generations …

After two generations of training on A.I. output, the A.I.-generated faces are less diverse than the original image.

After three generations …

After three generations of training on A.I. output, the A.I.-generated faces grow more similar.

After four generations, the faces all appeared to converge.

After four generations of training on A.I. output, the A.I.-generated faces appear almost identical.

This drop in diversity is “a hidden danger,” Mr. Alemohammad said. “You might just ignore it and then you don’t understand it until it’s too late.”

Just as with the digits, the changes are clearest when most of the data is A.I.-generated. With a more realistic mix of real and synthetic data, the decline would be more gradual.

But the problem is relevant to the real world, the researchers said, and will inevitably occur unless A.I. companies go out of their way to avoid their own output.

Related research shows that when A.I. language models are trained on their own words, their vocabulary shrinks and their sentences become less varied in their grammatical structure — a loss of “linguistic diversity.”
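A rough sketch of how a vocabulary can shrink, assuming a toy "model" that is nothing more than a table of word frequencies (the cited research used real language models, and the names and sizes here are invented). Each generation writes a fixed amount of text by sampling from the table, and the next generation only learns the words that actually appeared.

```python
import random
from collections import Counter


def vocabulary_sizes(generations=10, words_per_generation=2000,
                     vocab=1000, seed=0):
    """Return the number of distinct words used in each generation."""
    rng = random.Random(seed)
    # Zipf-like starting frequencies: a few common words, many rare ones.
    words = [f"w{i}" for i in range(vocab)]
    weights = [1.0 / (rank + 1) for rank in range(vocab)]
    sizes = []
    for _ in range(generations):
        sample = rng.choices(words, weights=weights, k=words_per_generation)
        counts = Counter(sample)
        sizes.append(len(counts))
        # The next "model" only knows the words it actually saw.
        words = list(counts)
        weights = [counts[w] for w in words]
    return sizes


sizes = vocabulary_sizes()
print(sizes)
```

Because a word that goes unsampled in one round can never come back, the vocabulary in this sketch can only stay the same or shrink, and the rare words are the first to go.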

And studies have found that this process can amplify biases in the data and is more likely to erase data pertaining to minorities.

Ways out

Perhaps the biggest takeaway of this research is that high-quality, diverse data is valuable and hard for computers to emulate.

One solution, then, is for A.I. companies to pay for this data instead of scooping it up from the internet, ensuring both human origin and high quality.

OpenAI and Google have made deals with some publishers or websites to use their data to improve A.I. (The New York Times sued OpenAI and Microsoft last year, alleging copyright infringement. OpenAI and Microsoft say their use of the content is considered fair use under copyright law.)

Better ways to detect A.I. output would also help mitigate these problems.

Google and OpenAI are working on A.I. “watermarking” tools, which introduce hidden patterns that can be used to identify A.I.-generated images and text.

But watermarking text is challenging, researchers say, because these watermarks can’t always be reliably detected and can easily be subverted (they may not survive being translated into another language, for example).

A.I. slop is not the only reason that companies may need to be wary of synthetic data. Another problem is that there are only so many words on the internet.

Some experts estimate that the largest A.I. models have been trained on a few percent of the available pool of text on the internet. They project that these models may run out of public data to sustain their current pace of growth within a decade.

“These models are so enormous that the entire internet of images or conversations is somehow close to being not enough,” Professor Baraniuk said.

To meet their growing data needs, some companies are considering using today’s A.I. models to generate data to train tomorrow’s models. But researchers say this can lead to unintended consequences (such as the drop in quality or diversity that we saw above).

There are certain contexts where synthetic data can help A.I.s learn — for example, when output from a larger A.I. model is used to train a smaller one, or when the correct answer can be verified, like the solution to a math problem or the best strategies in games like chess or Go.
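A minimal sketch of the "verifiable answer" case, assuming a made-up generator that gets simple addition wrong some of the time. The function names `noisy_solver` and `verified_examples` and the 30 percent error rate are hypothetical; the point is only that a cheap, exact check can keep bad synthetic examples out of the training set.

```python
import random


def noisy_solver(a, b, rng, error_rate=0.3):
    """Hypothetical stand-in for a model that sometimes answers wrongly."""
    answer = a + b
    if rng.random() < error_rate:
        answer += rng.choice([-2, -1, 1, 2])
    return answer


def verified_examples(count, seed=0):
    """Generate synthetic Q&A pairs and keep only those that check out."""
    rng = random.Random(seed)
    kept = []
    for _ in range(count):
        a, b = rng.randint(0, 99), rng.randint(0, 99)
        answer = noisy_solver(a, b, rng)
        if answer == a + b:  # The check is cheap and exact for arithmetic.
            kept.append((f"{a} + {b} = ?", answer))
    return kept


examples = verified_examples(1000)
print(len(examples), "of 1000 generated examples passed verification")
```

Most real-world text has no such checker, which is one reason this approach is limited to areas like math and games.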

And new research suggests that when humans curate synthetic data (for example, by ranking A.I. answers and choosing the best one), it can alleviate some of the problems of collapse.

Companies are already spending a lot on curating data, Professor Kempe said, and she believes this will become even more important as they learn about the problems of synthetic data.

But for now, there’s no replacement for the real thing.

About the data

To produce the images of A.I.-generated digits, we followed a procedure outlined by researchers. We first trained a type of neural network known as a variational autoencoder using a standard data set of 60,000 handwritten digits. We then trained a new neural network using only the A.I.-generated digits produced by the previous neural network, and repeated this process in a loop 30 times.

To create the statistical distributions of A.I. output, we used each generation’s neural network to create 10,000 drawings of digits. We then used the first neural network (the one that was trained on the original handwritten digits) to encode these drawings as a set of numbers, known as a “latent space” encoding. This allowed us to quantitatively compare the output of different generations of neural networks. For simplicity, we used the average value of this latent space encoding to generate the statistical distributions shown in the article.


Diablo Canyon clears last California permit hurdle to keep running

Central Coast Water authorities approved waste discharge permits for Diablo Canyon nuclear plant Thursday, making it nearly certain it will remain running through 2030, and potentially through 2045.

The Pacific Gas & Electric-owned plant was originally supposed to shut down in 2025, but lawmakers extended that deadline by five years in 2022, fearing power shortages if a plant that provides about 9 percent of the state’s electricity were to shut off.

In December, Diablo Canyon received a key permit from the California Coastal Commission through an agreement in which PG&E gave up about 12,000 acres of nearby land for conservation to compensate for the loss of marine life caused by the plant’s operations.

Today’s 6-0 vote by the Central Coast Regional Water Board approved PG&E’s plans to limit discharges of pollutants into the water and continue to run its “once-through cooling system.” The cooling technology flushes ocean water through the plant to absorb heat and discharges it, killing what the Coastal Commission estimated to be two billion fish each year.

The board also granted the plant a certification under the Clean Water Act, the last state regulatory hurdle the facility needed to clear before the federal Nuclear Regulatory Commission (NRC) is allowed to renew its permit through 2045.

The new regional water board permit includes several changes from the last one, which was issued in 1990. One is a first-time limit on the chemical tributyltin-10, a toxic, internationally banned compound added to paint to prevent organisms from growing on ship hulls.

Additional changes stemmed from a 2025 Supreme Court ruling that said if pollutant permits like this one impose specific water quality requirements, they must also specify how to meet them.

The plant’s biggest water quality impact is the heated water it discharges into the ocean, and that part of the permit remains unchanged. Radioactive waste from the plant is regulated not by the state but by the NRC.

California state law only allows the plant to remain open to 2030, but some lawmakers and regulators have already expressed interest in another extension given growing electricity demand and the plant’s role in providing carbon-free power to the grid.

Some board members raised concerns about granting a certification that would allow the NRC to reauthorize the plant’s permits through 2045.

“There’s every reason to think the California entities responsible for making the decision about continuing operation, namely the California [Independent System Operator] and the Energy Commission, all of them are sort of leaning toward continuing to operate this facility,” said board member Dominic Roques. “I’d like us to be consistent with state law at least, and imply that we are consistent with ending operation at five years.”

Other board members noted that regulators could revisit the permits in five years, or sooner if state or federal laws change, and the board ultimately approved the permit.


Deadly bird flu found in California elephant seals for the first time

The H5N1 bird flu virus that devastated South American elephant seal populations has been confirmed in seals at California’s Año Nuevo State Park, researchers from UC Davis and UC Santa Cruz announced Wednesday.

The virus has ravaged wild, commercial and domestic animals across the globe and was found last week in seven weaned pups. The confirmation came from the U.S. Department of Agriculture’s National Veterinary Services Laboratory in Ames, Iowa.

“This is exceptionally rapid detection of an outbreak in free-ranging marine mammals,” said Professor Christine Johnson, director of the Institute for Pandemic Insights at UC Davis’ Weill School of Veterinary Medicine. “We have most likely identified the very first cases here because of coordinated teams that have been on high alert with active surveillance for this disease for some time.”

Since last week, when researchers began noticing neurological and respiratory signs of the disease in some animals, 30 seals have died, said Roxanne Beltran, a professor of ecology and evolutionary biology at UC Santa Cruz. Twenty-nine were weaned pups and the other was an adult male. The team has so far confirmed the virus in only seven of the dead pups.

Infected animals often have tremors, convulsions, seizures and muscle weakness, Johnson said.

Beltran said teams from UC Santa Cruz, UC Davis and California State Parks monitor the animals 260 days of the year, “including every day from December 15 to March 1” when the animals typically come ashore to breed, give birth and nurse.

The concerning behavior and deaths were first noticed Feb. 19.

“This is one of the most well-studied elephant seal colonies on the planet,” she said. “We know the seals so well that it’s very obvious to us when something is abnormal. And so my team was out that morning and we observed abnormal behaviors in seals and increased mortality that we had not seen the day before in those exact same locations. So we were very confident that we caught the beginning of this outbreak.”

In late 2022, the virus decimated southern elephant seal populations in South America and several sub-Antarctic Islands. At some colonies in Argentina, 97% of pups died, while on South Georgia Island, researchers reported a 47% decline in breeding females between 2022 and 2024. Researchers believe tens of thousands of animals died.

More than 30,000 sea lions in Peru and Chile died between 2022 and 2024. In Argentina, roughly 1,300 sea lions and fur seals perished.

At the time, researchers were not sure why northern Pacific populations were not infected, but suspected previous or milder strains of the virus conferred some immunity.

The virus is better known in the U.S. for sweeping through the nation’s dairy herds, where it infected dozens of dairy workers, millions of cows and thousands of wild, feral and domestic mammals. It’s also been found in wild birds and killed millions of commercial chickens, geese and ducks.

Two Americans have died from the virus since 2024, and 71 have been infected. The vast majority were dairy or commercial poultry workers. One death was that of a Louisiana man who had underlying conditions and was believed to have been exposed via backyard poultry or wild birds.

Scientists at UC Santa Cruz and UC Davis increased their surveillance of the elephant seals in Año Nuevo in recent years. The catastrophic effect of the disease prompted worry that it would spread to California elephant seals, said Beltran, whose lab leads UC Santa Cruz’s northern elephant seal research program at Año Nuevo.

Johnson, the UC Davis researcher, said the team has been working with stranding networks across the Pacific region for several years — sampling the tissue of birds, elephant seals and other marine mammals. They have not seen the virus in other California marine mammals. Two previous outbreaks of bird flu in U.S. marine mammals occurred in Maine in 2022 and Washington in 2023, affecting gray and harbor seals.

The virus in the animals has not yet been fully sequenced, so it’s unclear how the animals were exposed.

“We think the transmission is actually from dead and dying sea birds” living among the sea lions, Johnson said. “But we’ll certainly be investigating if there’s any mammal-to-mammal transmission.”

Genetic sequencing from southern elephant seal populations in Argentina suggested that version of the virus had acquired mutations that allowed it to pass between mammals.

The H5N1 virus was first detected in geese in China in 1996. Since then it has spread across the globe, reaching North America in 2021. The only continent where it has not been detected is Oceania.

Año Nuevo State Park, just north of Santa Cruz, is home to a colony of some 5,000 elephant seals during the winter breeding season. About 1,350 seals were on the beach when the outbreak began. Most of those animals, roughly 900, are weaned pups. Other large California colonies are located at Piedras Blancas and Point Reyes National Seashore.

It’s “important to keep this in context. So far, avian influenza has affected only a small proportion of the weaned at this time, and there are still thousands of apparently healthy animals in the population,” Beltran said in a press conference.

Public access to the park has been closed and guided elephant seal tours canceled.

Health and wildlife officials urge beachgoers to keep a safe distance from wildlife and keep dogs leashed because the virus is contagious.


When slowing down can save a life: Training L.A. law enforcement to understand autism

Kate Movius moved among a roomful of Los Angeles County sheriff’s deputies, passing out a pop trivia quiz and paper prism glasses.

She told them to put on the vision-distorting glasses, and to write with their nondominant hand. As they filled out the tests, Movius moved about the City of Industry classroom pounding abruptly on tables. Then came the cowbell. An aide flashed the overhead lights on and off at random. The goal was to help the deputies understand the feeling of sensory overwhelm, which many autistic people experience when incoming stimulation exceeds their capacity to process.

“So what can you do to assist somebody, or de-escalate somebody, or get information from someone who suffers from a sensory disorder?” Movius asked the rattled crowd afterward. “We can minimize sensory input. … That might be the difference between them being able to stay calm and them taking off.”

Movius, founder of the consultancy Autism Interaction Solutions, is one of a growing number of people around the U.S. working to teach law enforcement agencies to recognize autistic behaviors and ensure that encounters between neurodevelopmentally disabled people and law enforcement end safely.

She and City of Industry Mayor Cory Moss later passed out bags filled with tools donated by the city to aid interactions: a pair of noise-damping headphones to decrease auditory input, a whiteboard, a set of communication cards with words and images to point to, fidget toys to calm and distract.

“The thing about autistic behavior when it comes to law enforcement is a lot of it may look suspicious, and a lot of it may feel very disrespectful,” said Movius, who is also the parent of an autistic 25-year-old man. Responding officers, she said, “are not coming in thinking, ‘Could this be a developmentally disabled person?’ I would love for them to have that in the back of their minds.”

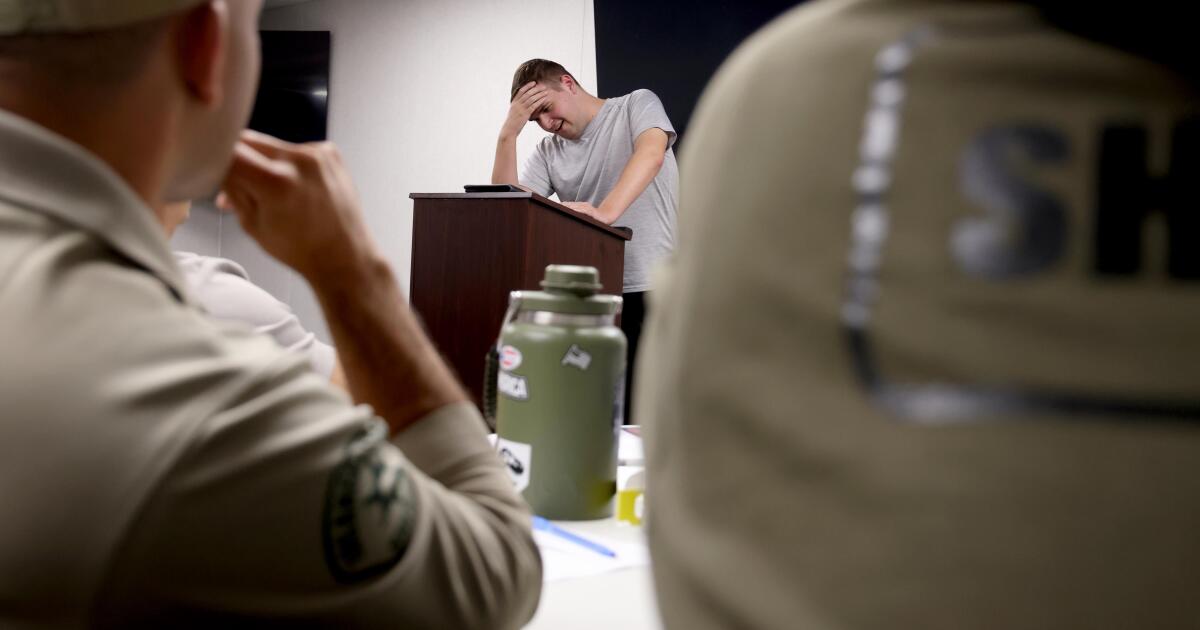

A sheriff’s deputy reads a pamphlet on autism during the training program.

(Genaro Molina / Los Angeles Times)

Autism spectrum disorder is a developmental condition that manifests differently in nearly every person who has it. Symptoms cluster around difficulties in communication, social interaction and sensory processing.

An autistic person stopped by police might hold the officer’s gaze intensely or not look at them at all. They may repeat a phrase from a movie, repeat the officer’s question or temporarily lose their ability to speak. They might flee.

All are common involuntary responses for an autistic person in a stressful situation, which a sudden encounter with law enforcement almost invariably is. To someone unfamiliar with the condition, all could be mistaken for intoxication, defiance or guilt.

Autism rates in the U.S. have increased nearly fivefold since the Centers for Disease Control began tracking diagnoses in 2000, a rise experts attribute to broadening diagnostic criteria and better efforts to identify children who have the condition.

The CDC now estimates that 1 in 31 U.S. 8-year-olds is autistic. In California, the rate is closer to 1 in 22 children.

As diverse as the autistic population is, people across the spectrum are more likely to be stopped by law enforcement than neurotypical peers.

About 15% of all people in the U.S. ages 18 to 24 have been stopped by police at some point in their lives, according to federal data. While the government doesn’t track encounters for disabled people specifically, a separate study found that 20% of autistic people ages 21 to 25 have been stopped, often after a report or officer observation of a person behaving unusually.

Some of these encounters have ended in tragedy.

In 2021, Los Angeles County sheriff’s deputies shot and permanently paralyzed a deaf autistic man after family members called 911 for help getting him to a hospital.

Isaias Cervantes, 25, had become distressed about a shopping trip and started pushing his mother, his family’s attorney said at the time. He resisted as two deputies attempted to handcuff him and one of the deputies shot him, according to a county report.

In 2024, Ryan Gainer’s family called 911 for support when the 15-year-old became agitated. Responding San Bernardino County sheriff’s deputies shot and killed him outside his Apple Valley home.

Last year, police in Pocatello, Idaho, shot Victor Perez, 17, through a chain-link fence after the nonspeaking teenager did not heed their shouted commands. He died from his injuries in April.

Sheriff’s deputies take a trivia quiz using their non-writing hands, while wearing vision-distorting glasses, as Kate Movius, standing left, and Industry Mayor Cory Moss, right, ring cowbells. The idea was to help them understand the sensory overwhelm some autistic people experience.

(Genaro Molina / Los Angeles Times)

As early as 2001, the FBI published a bulletin on police officers’ need to adjust their approach when interacting with autistic people.

“Officers should not interpret an autistic individual’s failure to respond to orders or questions as a lack of cooperation or as a reason for increased force,” the bulletin stated. “They also need to recognize that individuals with autism often confess to crimes that they did not commit or may respond to the last choice in a sequence presented in a question.”

But a review of multiple studies last year by Chapman University researchers found that while up to 60% of officers have been on a call involving an autistic person, only 5% to 40% had received any training on autism.

In response, universities, nonprofits and private consultants across the U.S. have developed curricula for law enforcement on how to recognize autistic behaviors and adapt accordingly.

The primary goal, Movius told deputies at November’s training session, is to slow interactions down to the greatest extent possible. Many autistic people require additional time to process auditory input and verbal responses, particularly in unfamiliar circumstances.

If at all possible, Movius said, wait 20 seconds for a response after asking a question. It may feel unnaturally long, she acknowledged. But every additional question or instruction fired in that time — what’s your name? Did you hear me? Look at me. What’s your name? — just decreases the likelihood that a person struggling to process will be able to respond at all.

Moss’ son, Brayden, then 17, was one of several teenagers and young adults with autism who spoke or wrote statements to be read to the deputies. The diversity of their speech patterns and physical mannerisms showed the breadth of the spectrum. Some were fluently verbal, while others communicated through signs and notes.

“This population is so diverse. It is so complicated. But if there’s anything that we can show [deputies] in here that will make them stop and think, ‘Hey, what if this is autism?’ … it is saving lives,” Moss said.

Mayor Cory Moss, left, and Kate Movius hug at the end of the training program last November. Movius started Autism Interaction Solutions after her son was born with profound autism.

(Genaro Molina / Los Angeles Times)

Some disability advocates cautioned that it takes more than isolated training sessions to ensure encounters end safely.

Judy Mark, co-founder and president of the nonprofit Disability Voices United, says she trained thousands of officers on safe autism interactions but stopped after Cervantes’ shooting. She now urges families concerned about an autistic child’s safety to call an ambulance rather than law enforcement.

“I have significant concern about these training sessions,” Mark said. “People get comfort from it, and the Sheriff’s Department can check the box.”

Supporters argue that a brief course, while not a panacea, is better than no preparation at all. Some years ago, Movius received a letter from a man whose profoundly autistic son slipped away as the family loaded their car at the beach. The son opened the unlocked door of a police vehicle, climbed into the back and began to flail in distress.

Though surprised, the officer seated at the wheel de-escalated the situation and helped the young man find his family, the father wrote to Movius. The officer had just been to her training.
