Business

Column: Two key antiabortion studies have been retracted as junk science. Will the Supreme Court care?

If the effort to ban medication abortion now before the Supreme Court demonstrates anything, it’s that the damage caused in our society by junk science can be disastrous indeed.

That’s the implication of the retraction of two scientific studies, announced Monday by the journal publisher Sage. The studies provided the purported rationale for a Texas federal judge’s ruling overturning the approval of the abortion drugs by the Food and Drug Administration.

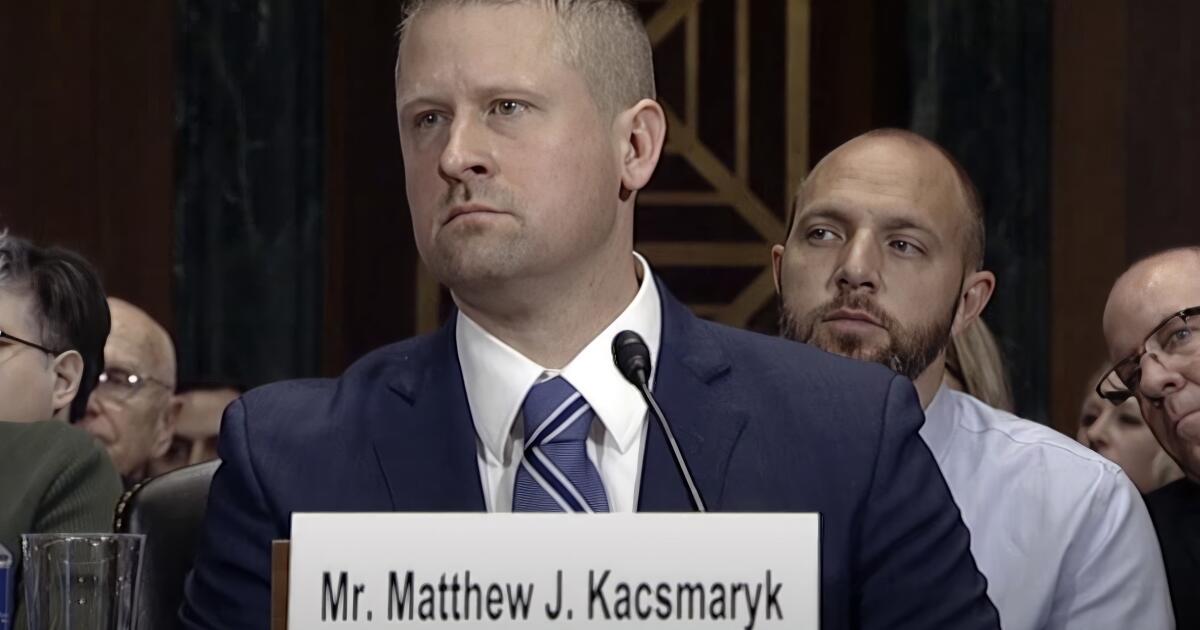

It’s impossible to overstate the potential ramifications of the ruling issued April 7 by federal Judge Matthew Kacsmaryk of Amarillo, Texas, which invalidated FDA approvals of the drug mifepristone dating back to 2000.


Kacsmaryk’s ruling was the basis for an outstandingly loopy decision by the U.S. 5th Circuit Court of Appeals on Aug. 16, which narrowed his ruling somewhat but not entirely. The Supreme Court has scheduled oral arguments on the case for March 26.

The worst-case scenario is that the Supreme Court will follow Kacsmaryk in revoking the FDA’s approval. That would block access to what has become the most common abortion method in the U.S. Providers would have to shift to other medications that are not as effective as mifepristone.

The court could also narrow the reach of the FDA’s actions in 2000, when it declared mifepristone safe and effective, and in 2016 and 2021, when it allowed patients to order the drug online and receive it by mail or from pharmacies rather than at doctors’ offices.

The court could restore previous rules limiting the use of mifepristone to the first seven weeks of gestation instead of the current 10 weeks. It could require that it be administered only through a physician’s prescription and only directly by doctors.

The damage could go further. An expansive Supreme Court ruling could cripple the FDA’s authority to determine the safety and efficacy of drugs and subject its judgments to increasingly partisan challenges. It could also bring a long-disregarded, 150-year-old antipornography law to legal prominence.

Let’s start at the beginning, with Kacsmaryk’s 2023 ruling. As I’ve reported in the past, Kacsmaryk’s jurisprudence has been a blot on the judicial system since he joined the court in 2019 as a Trump appointee. Kacsmaryk is the only federal judge in the Amarillo division of the U.S. District Court for the Northern District of Texas.

That has made his courthouse a favored venue for right-wing litigants. A former functionary of a conservative Christian legal group, he has been a dependable foe of efforts to protect LGBTQ+ legal rights and access to contraceptives.

His record made him the ideal judge for a coalition of antiabortion groups, including the American Assn. of Pro-Life Obstetricians & Gynecologists and the Christian Medical & Dental Associations, that was waging an attack on medication abortion.

Kacsmaryk’s April 7 ruling bristled with antiabortion terminology such as the terms “unborn human” and “unborn child”; abortion providers were labeled “abortionists.” By contrast, a ruling protecting access to mifepristone issued the same day by federal Judge Thomas Owen Rice of Washington state, an Obama appointee, used neutral language, such as a reference to “institutions and providers who provide abortion care.”

Kacsmaryk accepted common talking points of the antiabortion movement as legal conclusions. He cited the Comstock Act, an antipornography law enacted in 1873, no fewer than 29 times. He accepted as read the antiabortion movement’s contention that it barred the shipment of mifepristone through the U.S. mail, even though federal courts had rejected that interpretation for more than 100 years.

He questioned the FDA’s judgment that the drug was safe and effective, despite overwhelming evidence that it is. The core of Kacsmaryk’s findings questioning the FDA’s approval of the drug came from two studies led by James Studnicki, director of data analytics at the Charlotte Lozier Institute, which says in the mission statement on its website that it “advises and leads the pro-life movement with groundbreaking scientific, statistical, and medical research.”

Among the institute’s principal aims is “to warn women about the dangers of chemical abortion and expose the harms of the FDA’s current abortion pill policy that simply ignores the known risks.”

Kacsmaryk cited the Studnicki papers to endorse the plaintiff organizations’ conclusions that adverse reactions to mifepristone could “overwhelm the medical system and place ‘enormous pressure and stress’” on doctors due to “significant complications requiring medical attention,” and that women taking the drug were reporting to emergency rooms at much greater rates than those who had undergone surgical abortions.

Sage’s retraction notice explodes those claims. The papers were published in 2021 and 2022 in Sage’s Health Services Research and Managerial Epidemiology journal. (A third Studnicki paper published in 2019 but not cited by Kacsmaryk was also retracted.)

Sage’s inquiry was triggered by Chris Adkins, a pharmaceutical sciences professor at South University School of Pharmacy in Savannah, Ga.

Among the flaws Adkins pointed to was that one study appeared to inflate claims about adverse reactions to the drug by failing to distinguish ER visits for routine complaints from those due to the drug. Nor did the Studnicki research account for the rise in medication abortions starting in 2000, or for the concurrent increase in Medicaid enrollment, which contributed to that growth.

Sage said that in its pre-retraction review, “experts identified fundamental problems with the design … and methodology” of the questioned papers, as well as “unjustified or incorrect factual assumptions, material errors in the authors’ analysis of the data, and misleading presentations of the data that … demonstrate a lack of scientific rigor and invalidate the authors’ conclusions in whole or in part.”

Sage also noted that the papers declared that the authors had no conflicts of interest in researching and writing the papers. In fact, all but one of the authors of the studies Kacsmaryk cited were affiliated with the Charlotte Lozier Institute, the American Assn. of Pro-Life Obstetricians and Gynecologists, or the Elliot Institute, which are antiabortion advocacy organizations. Although the authors had disclosed their affiliations, Sage reported, they had not acknowledged that these posed a conflict.

Studnicki objects to the retractions, responding that the action is “unjustified” and that his data are “accurately reported.”

Kacsmaryk’s ruling has caused immense confusion in the administration of mifepristone. The 5th Circuit appeals court overturned his rejection of the FDA’s original 2000 conclusion that mifepristone is safe and effective, but upheld his reversal of the FDA’s 2016 and 2021 decisions loosening restrictions on the drug’s use. It stayed the injunctions against those uses until the Supreme Court rules, however.

The appeals court opinion featured one of the more curious flights of fancy by a federal judge: a separate opinion by Appellate Judge James C. Ho, another Trump appointee. He advocated overturning the 2000 FDA approval as well as the 2016 and 2021 revisions, on the grounds that abortions cause “aesthetic injury” to doctors forced to participate in the procedure, even if only by treating patients for adverse reactions.

“Unborn babies are a source of profound joy for those who view them,” Ho wrote. “Doctors delight in working with their unborn patients — and experience an aesthetic injury when they are aborted.”

The real injury that could arise from the Supreme Court’s consideration of mifepristone is to the role of science in judicial decision-making, if the justices substitute junk science for rigorous research.

More than 20 years of medical practice has established that the drug is safe and effective for its purpose — indeed, safer than many other drugs in common use in the U.S. Revoking its approval would be based on no scientific evidence at all, only on politics. And that won’t be good for anyone.


A new delivery bot is coming to L.A., built stronger to survive in these streets

The rolling robots that deliver groceries and hot meals across Los Angeles are getting an upgrade.

Coco Robotics, a UCLA-born startup that’s deployed more than 1,000 bots across the country, unveiled its next-generation machines on Thursday.

The new robots are bigger, tougher and better equipped for autonomy than their predecessors. The company will use them to expand into new markets and increase its presence in Los Angeles, where it makes deliveries through a partnership with DoorDash.

Dubbed Coco 2, the next-gen bots have upgraded cameras and front-facing lidar, a laser-based sensor used in self-driving cars. They will use hardware built by Nvidia, the Santa Clara-based artificial intelligence chip giant.

Coco co-founder and chief executive Zach Rash said Coco 2 will be able to make deliveries even in conditions unsafe for human drivers. The robot is fully submersible in case of flooding and is compatible with special snow tires.

Zach Rash, co-founder and CEO of Coco, opens the top of the new Coco 2 (Next-Gen) at the Coco Robotics headquarters in Venice.

(Kayla Bartkowski/Los Angeles Times)

Early this month, a cute Coco was recorded struggling through flooded roads in L.A.

“She’s doing her best!” said the person recording the video. “She is doing her best, you guys.”

Instagram followers cheered the bot on, with one posting, “Go coco, go,” and others calling for someone to help the robot.

“We want it to have a lot more reliability in the most extreme conditions where it’s either unsafe or uncomfortable for human drivers to be on the road,” Rash said. “Those are the exact times where everyone wants to order.”

The company will ramp up mass production of Coco 2 this summer, Rash said, aiming to produce 1,000 bots each month.

The design is sleek and simple, with a pink-and-white ombré paint job, the company’s name printed in lowercase, and a keypad for loading and unloading the cargo area. The robots have four wheels and a bigger internal compartment for carrying food and goods.

Many of the bots will be used for expansion into new markets across Europe and Asia, but they will also hit the streets in Los Angeles and operate alongside the older Coco bots.

Coco has about 300 bots in Los Angeles already, serving customers from Santa Monica and Venice to Westwood, Mid-City, West Hollywood, Hollywood, Echo Park, Silver Lake, downtown, Koreatown and the USC area.

The new Coco 2 (Next-Gen) drives along the sidewalk at the Coco Robotics headquarters in Venice.

(Kayla Bartkowski/Los Angeles Times)

The company is in discussion with officials in Culver City, Long Beach and Pasadena about bringing autonomous delivery to those communities.

There’s also been demand for the bots in Studio City, Burbank and the San Fernando Valley, according to Rash.

“A lot of the markets that we go into have been telling us they can’t hire enough people to do the deliveries and to continue to grow at the pace that customers want,” Rash said. “There’s quite a lot of area in Los Angeles that we can still cover.”

The bots already operate in Chicago, Miami and Helsinki, Finland. Last month, they arrived in Jersey City, N.J.

Late last year, Coco announced a partnership with DashMart, DoorDash’s delivery-only online store. The partnership allows Coco bots to deliver fresh groceries, electronics and household essentials as well as hot prepared meals.

With the release of Coco 2, the company is eyeing faster deliveries using bike lanes and road shoulders as opposed to just sidewalks, in cities where it’s safe to do so. Coco 2 can adapt more quickly to new environments and physical obstacles, the company said.

Zach Rash, co-founder and CEO of Coco.

(Kayla Bartkowski/Los Angeles Times)

Coco 2 is designed to operate autonomously, but there will still be human oversight in case the robot runs into trouble, Rash said. Damaged sidewalks or unexpected construction can stop a bot in its tracks.

The need for human supervision has created a new field of jobs for Angelenos.

Though there have been reports of pedestrians bullying the robots by knocking them over or blocking their path, Rash said the community response has been positive overall. The bots are meant to inspire affection.

“One of the design principles on the color and the name and a lot of the branding was to feel warm and friendly to people,” Rash said.

Coco plans to add thousands of bots to its fleet this year. The delivery service got its start as a dorm room project in 2020, when Rash was a student at UCLA. He co-founded the company with fellow student Brad Squicciarini.

The Santa Monica-based company has completed more than 500,000 zero-emission deliveries and its bots have collectively traveled around 1 million miles.

Coco chooses neighborhoods to deploy its bots based on density, prioritizing areas with restaurants clustered together and short delivery distances as well as places where parking is difficult.

The robots can relieve congestion by taking cars and motorbikes off the roads. Rash said there is so much demand for delivery services that the company’s bots are not taking jobs from human drivers.

Instead, Coco can fill gaps in the delivery market while saving merchants money and improving the safety of city streets.

“This vehicle is inherently a lot safer for communities than a car,” Rash said. “We believe our vehicles can operate the highest quality of service and we can do it at the lowest price point.”


Trump orders federal agencies to stop using Anthropic’s AI after clash with Pentagon

President Trump on Friday directed federal agencies to stop using technology from San Francisco artificial intelligence company Anthropic, escalating a high-profile clash between the AI startup and the Pentagon over safety.

In a Friday post on the social media site Truth Social, Trump described the company as “radical left” and “woke.”

“We don’t need it, we don’t want it, and will not do business with them again!” Trump said.

The president’s harsh words mark a major escalation in the ongoing battle between some in the Trump administration and several technology companies over the use of artificial intelligence in defense tech.

Anthropic has been sparring with the Pentagon, which had threatened to end its $200-million contract with the company on Friday if it didn’t loosen restrictions on its AI model so it could be used for more military purposes. Anthropic had been asking for more guarantees that its tech wouldn’t be used for surveillance of Americans or autonomous weapons.

The tussle could hobble Anthropic’s business with the government. The Trump administration said the company was added to a sweeping national security blacklist, ordering federal agencies to immediately discontinue use of its products and barring any government contractors from maintaining ties with it.

Defense Secretary Pete Hegseth, who met with Anthropic’s Chief Executive Dario Amodei this week, criticized the tech company after Trump’s Truth Social post.

“Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon,” he wrote Friday on social media site X.

Anthropic didn’t immediately respond to a request for comment.

Anthropic announced a two-year agreement with the Department of Defense in July to “prototype frontier AI capabilities that advance U.S. national security.”

The company has an AI chatbot called Claude, but it also built a custom AI system for U.S. national security customers.

On Thursday, Amodei signaled the company wouldn’t cave to the Department of Defense’s demands to loosen safety restrictions on its AI models.

The government has emphasized in negotiations that it wants to use Anthropic’s technology only for legal purposes, and that the safeguards Anthropic wants are already covered by law.

Still, Amodei was worried about Washington’s commitment.

“We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner,” he said in a blog post. “However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values.”

Tech workers have backed Anthropic’s stance.

Unions and worker groups representing 700,000 employees at Amazon, Google and Microsoft said this week in a joint statement that they’re urging their employers to reject these demands as well if they have additional contracts with the Pentagon.

“Our employers are already complicit in providing their technologies to power mass atrocities and war crimes; capitulating to the Pentagon’s intimidation will only further implicate our labor in violence and repression,” the statement said.

Anthropic’s standoff with the U.S. government could benefit its competitors, such as Elon Musk’s xAI or OpenAI.

Sam Altman, chief executive of OpenAI, the company behind ChatGPT and one of Anthropic’s biggest competitors, told CNBC in an interview that he trusts Anthropic.

“I think they really do care about safety, and I’ve been happy that they’ve been supporting our war fighters,” he said. “I’m not sure where this is going to go.”

Anthropic has distinguished itself from its rivals by touting its concern about AI safety.

The company, valued at roughly $380 billion, is legally required to balance making money with advancing the company’s public benefit of “responsible development and maintenance of advanced AI for the long-term benefit of humanity.”

Developers, businesses, government agencies and other organizations use Anthropic’s tools. Its chatbot can generate code, write text and perform other tasks. Anthropic also offers an AI assistant for consumers and makes money from paid subscriptions as well as contracts. Unlike OpenAI, which is testing ads in ChatGPT, Anthropic has pledged not to show ads in its chatbot Claude.

The company has roughly 2,000 employees and has revenue equivalent to about $14 billion a year.


Video: The Web of Companies Owned by Elon Musk


By Kirsten Grind, Melanie Bencosme, James Surdam and Sean Havey

February 27, 2026
