Business

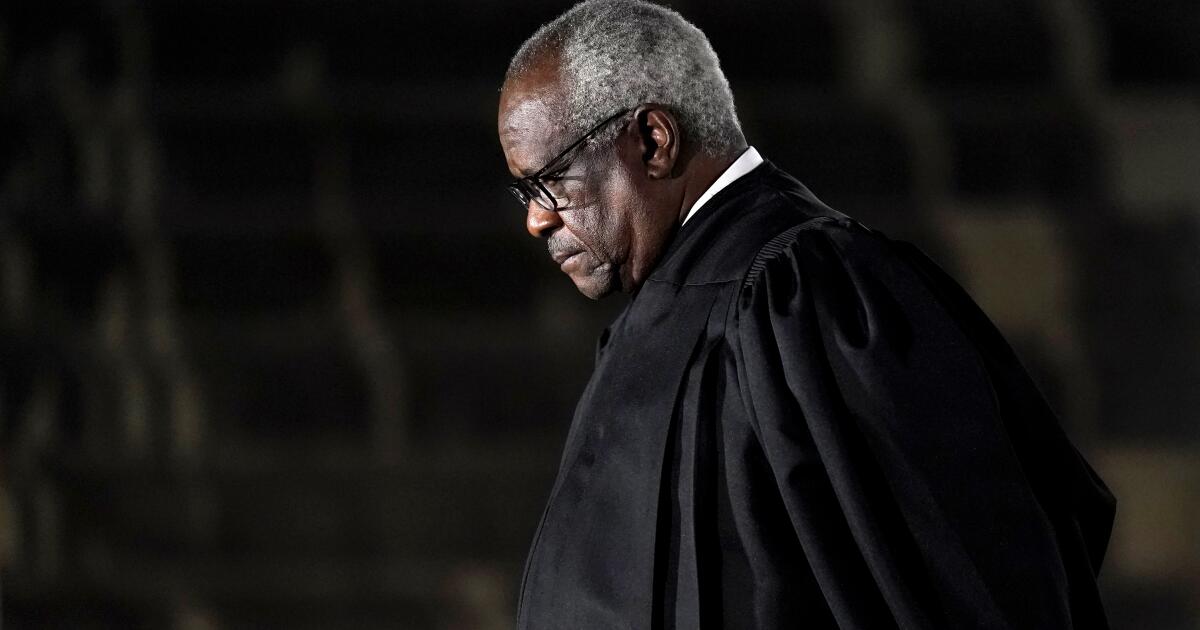

Column: Clarence Thomas and the bottomless self-pity of the upper classes

Articles asking us to feel sympathy for families barely scraping by on healthy six-figure incomes may be staples of the financial press, but it’s rare that they come packaged as real-world case studies attached to flesh-and-blood individuals.

But that’s what happened just before Christmas, when law professor Steven Calabresi defended Supreme Court Justice Clarence Thomas’ shadowy financial relationships with a passel of conservative billionaires by explaining that Thomas simply was trying to avoid the difficulty of surviving on his government salary of $285,400 a year.

“If Congress had adjusted for inflation the salary that Supreme Court justices made in 1969 at the end of the Warren Court, Justice Thomas would be being paid $500,000 a year,” Calabresi wrote, “and he would not need to rely as much as he has on gifts from wealthy friends.”

That’s a novel definition of “neediness”: Calabresi was saying that Thomas had no choice but to create an ethical quandary for himself by accepting gifts from “friends,” some of whom have interests directly or indirectly connected with cases before the Supreme Court and on which Thomas has ruled.

Given these ethical issues, Calabresi’s argument attracted some sarcasm. University of Colorado law professor Paul Campos interpreted its gist as: “It’s just fundamentally unreasonable to expect a SCOTUS justice to scrape along on nearly $300K per year in salary, without expecting that he’ll accept a petit cadeau or thirty, from billionaires who just can’t stand the sight of so much human suffering.”

Still, it’s useful to view the argument in the context of our never-ending debate about income and wealth in America. The debate regularly generates articles purporting to explain how outwardly wealthy families can’t make ends meet on income even as high as $500,000.

There was a noticeable surge in the genre in late 2020, when then-presidential candidate Joe Biden said he would guarantee no tax increases for households collecting less than $400,000. His definition of that income as the threshold of “wealthy” elicited instant pushback from writers arguing that it was no such thing.

As I’ve pointed out before, accounts of the penuriousness of life on such an income invariably involve financial legerdemain. The expense budgets published with these articles generally place the subject households in the costliest neighborhoods in the country, such as in San Francisco or Manhattan.

They also describe as necessary or unavoidable expenses many items that most ordinary families would consider luxuries. An article tied to Biden’s $400,000 promise, for instance, showed how its hypothetical family with that much income ended the year with only $34 on hand to cover “miscellaneous” expenses.

Along the way, however, the emblematic couple (two lawyers with two kids) paid $39,000 into their 401(k) retirement plans, $18,000 into 529 savings plans for college, and more than $100,000 on the mortgage and property taxes on their $2-million home. The budget also covered food with “regular food delivery,” life insurance, weekend getaways, clothes and personal care products.

Calabresi’s hand-wringing on Thomas’ behalf also engages in sleight of hand. He doesn’t mention that Thomas’ wife, Ginni, has her own career as a lawyer and consultant, though her income is unknown. (Thomas listed her employment on his most recent financial disclosure statement, but not her salary and benefits.)

Nor does Calabresi acknowledge that much of the gifting from wealthy friends on which Thomas purportedly “needs to rely” has had nothing to do with meeting the rigors of daily life as the average person would imagine them.

As ProPublica reported, the gifts included “at least 38 destination vacations, including a previously unreported voyage on a yacht around the Bahamas; 26 private jet flights, plus an additional eight by helicopter; a dozen VIP passes to professional and college sporting events, typically perched in the skybox; two stays at luxury resorts in Florida and Jamaica; and one standing invitation to an uber-exclusive golf club overlooking the Atlantic coast.”

To Calabresi, the questioning of this largess by the “left wing” is “sickening and unfair,” since in his view Thomas is “the best and most incorruptible Supreme Court justice in U.S. history.” Your mileage may vary; the overall tone of Calabresi’s piece is reminiscent of the line, “Raymond Shaw is the kindest, bravest, warmest, most wonderful human being I’ve ever known in my life,” uttered repeatedly in the movie “The Manchurian Candidate.”

It’s worth noting that in the movie, the line is spoken by soldiers who were brainwashed at a North Korean prison camp. Just saying.

Calabresi benchmarked Thomas’ salary against those of law school deans or young lawyers with sterling credentials such as former Supreme Court clerks, which he placed at about $500,000 a year. But discussions turning on the relative pay of various jobs and professions always have an otherworldly, even absurd, feel.

In part that’s because it’s harder than you might think to compare the work of a Supreme Court justice to that of a law school dean — not to mention comparing the work of a justice to that of a merchandise picker in an Amazon warehouse.

As Campos observes, Supreme Court justices have lifetime sinecures (none has ever been removed through impeachment), a lifetime pension at full pay after retirement, a huge professional bureaucracy to lean on, an annual three-month paid vacation and the “psychic benefit” of being endlessly praised for their perspicacity, wisdom and (to cite Calabresi) incorruptibility. Law school deans and lawyers can’t match those bennies.

For further perspective, the federal minimum wage has been frozen at $7.25 an hour since July 2009. In that time span, its purchasing power has fallen to $5.08. In the same period, the salary of Supreme Court justices has risen to $285,400 from $213,900, an increase of 33.4%.

That may not have quite kept up with inflation, which would have raised the justices’ pay to about $311,060 since 2009, but it’s not anything like the march backward experienced by those on the federal minimum wage.
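The arithmetic behind that comparison can be checked in a few lines. The sketch below uses only the figures quoted above; the variable and function names are illustrative, and the "implied inflation" figure is simply what the $311,060 estimate implies rather than an official price index.

```python
# Figures quoted in the column; no outside data source is used.
SALARY_2009 = 213_900          # justices' salary in 2009
SALARY_NOW = 285_400           # justices' salary today
SALARY_IF_INDEXED = 311_060    # column's estimate of 2009 pay adjusted for inflation
MIN_WAGE_NOMINAL = 7.25        # federal minimum wage, frozen since July 2009
MIN_WAGE_REAL = 5.08           # its purchasing power today, per the column


def pct_change(old: float, new: float) -> float:
    """Return the percentage change from old to new."""
    return (new - old) / old * 100


nominal_raise = pct_change(SALARY_2009, SALARY_NOW)
implied_inflation = pct_change(SALARY_2009, SALARY_IF_INDEXED)
real_salary_change = pct_change(SALARY_IF_INDEXED, SALARY_NOW)
real_min_wage_change = pct_change(MIN_WAGE_NOMINAL, MIN_WAGE_REAL)

print(f"Justices' nominal raise:      {nominal_raise:.1f}%")        # 33.4%
print(f"Inflation implied by estimate: {implied_inflation:.1f}%")   # 45.4%
print(f"Justices' real pay change:    {real_salary_change:.1f}%")   # -8.2%
print(f"Minimum wage real change:     {real_min_wage_change:.1f}%") # -29.9%
```

On these numbers, the justices lost roughly 8% of their purchasing power while minimum-wage workers lost about 30%.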

It’s true that representatives and senators also haven’t received a pay raise since 2009, but they’re not exactly living on the minimum wage: The salary for rank-and-file legislators is $174,000, while the majority and minority leaders of both chambers and the Senate president pro tem get $193,400 and the House speaker gets $223,500.

They also pay into and receive Social Security, have a separate pension benefit and have access to government health insurance. Anyway, they collect more than twice the median household income in America, which is about $75,000.

Occasionally some journalist will make the argument that Congress should be paid more. I’ve done it twice, in 2013 and 2019, on the argument that it might attract more candidates devoted to making government work.

But those were the halcyon days when Capitol Hill was only partly, not entirely, dysfunctional. I wouldn’t make the same argument today, when there’s reason to doubt that a higher wage would attract anyone better than the buffoons who walk the hallways of the House of Representatives at the moment.

Indeed, a higher wage might increase the psychological distance between our elected representatives and their constituents.

Just compare how eager they were in December 2017 to enact a huge tax cut for the wealthy, which passed a GOP-controlled Congress on the nod and was promptly signed by President Trump, with the dithering over the child tax credit, an immensely successful anti-poverty program that they allowed to expire at the beginning of 2022 and that is just now back on the negotiating table, with no guarantee of restoration.

That tells you that the gulf between the lawmakers and the people they supposedly represent is already too wide.

As for the other argument, that paying them and the Supreme Court justices more would reduce their incentive to take bribes, just what sort of people are we electing and appointing to office?

How much more would we have to pay Clarence Thomas to get him to stop taking free yacht voyages and private flights to private clubs from rich “friends”? Sadly, to ask the question is to answer it.

Business

Data centers under scrutiny by California lawmakers as fears rise about health and energy impacts

IMPERIAL — Whenever the weather changes suddenly, or the skyline becomes shrouded in a windy haze, Fernanda Camarillo braces herself for an asthma attack.

Her condition has become more manageable, but the 27-year-old said it’s still scary when her chest tightens and she starts to wheeze. It was one of her first thoughts when she heard about plans to develop a massive data center next to her home in Imperial County, a farming community near the border of Mexico that struggles with poor air quality.

“A lot of people in the county are asthmatic,” she said, explaining that she worries the new center would add more pollution. “I’ve been anxious — so many of us are voicing our concerns.”

Data centers have existed for decades but are rapidly changing and expanding due to the worldwide boom in artificial intelligence, or AI as it’s known. States and communities nationwide have started pushing back, citing concerns that the projects could strain power grids, increase utility bills and have negative health and environmental impacts.

In California, state legislators are debating how to protect residents and natural resources without creating so much red tape that developers go elsewhere, taking their jobs and taxable earnings with them.

No Data Center signs are posted in the front yard of a home that is right behind the proposed site.

“We can be supportive of innovation and a technology that is needed but also protect our communities and our health and our environment,” said state Sen. Steve Padilla (D-San Diego). “We can do both at the same time.”

The California Legislature is considering bills to prohibit the projects from being exempted from the state’s stringent environmental law and to impose new tariffs on new major energy users that strain power supplies. Lawmakers also have proposed restrictions on new data centers, requiring companies to provide verifiable estimates on expected water and energy usage before they can be granted a business permit.

Imperial resident Fernanda Camarillo, who is an asthmatic, holds some of her medications.

Members of Congress also expressed concerns. Rep. Ro Khanna, speaking at a town hall about AI last month at Stanford University, said legislators must ensure data centers serve the communities that power them.

“We live in a new gilded age,” said Khanna (D-Fremont). “What kind of future are we going to build?”

::

Eric Masanet, a professor at UC Santa Barbara specializing in sustainability science for emerging technologies, described the facilities as the “brains” of the internet. The sprawling centers are filled with banks of specialized computers that process online shopping orders, stream movies, host websites, encode Zoom and other videoconferencing apps, store data and serve as switching stations for the digital world that’s now woven into daily life.

Data centers, particularly those that power AI, use significant amounts of water and energy. The facilities accounted for about 4.4% of the nation’s total electricity consumption in 2023, up from 1.9% in 2018, according to a report provided to Congress from the Lawrence Berkeley National Laboratory. The researchers projected that figure will reach 6.7% to 12% by 2028.

Many companies, including big tech giants like Meta, Google and Amazon, are making major investments in AI.

“We are building a lot more data centers faster than we ever did — and a new AI data center is 10 to 20, maybe 30 times, the size of the largest data centers we had before,” Masanet said.

The proposed site of the 950,000-square-foot data center is on a dusty parcel that is next to the Victoria Ranch housing community and adjacent to farmland in Imperial, Calif.

It’s unclear how many data centers are in the state. A California Energy Commission spokesperson told the Los Angeles Times it does not track this information. Data Center Map, a nongovernmental website that tracks data centers across the world, lists 289 facilities in California, with more than 4,000 nationwide.

The federal government has, so far, largely left it to states or localities to regulate data centers.

The facilities can generate significant revenue for local governments due to sales and property taxes.

But some new proposals are sparking a backlash. More than 200 community and environmental organizations, including a dozen from California, sent an open letter to Congress in December calling for a national moratorium on new data centers.

Robert Gould, a pathologist with San Francisco Bay Physicians for Social Responsibility, one of the organizations that signed the letter, explained that data centers are causing a shift away from renewable energy and back toward fossil fuels because the facilities need a reliable and constant stream of power.

Cornell University researchers last year estimated that AI growth could add 24 to 44 million metric tons of carbon dioxide to the atmosphere annually by 2030, unless steps are taken to change course.

Gould said fossil fuel emissions are associated with various cancers, an increase in hospitalizations for older adults due to respiratory conditions, and asthma attacks or stunted lung growth in children. Particulate matter from fossil fuel emissions is also linked to cardiovascular events and negative effects on maternal fetal health.

Gould’s organization has noticed an alarming trend.

“These are generally placed in communities that are the least able to defend themselves,” he said.

Farmworkers toil in the noon heat to pick vegetables in Imperial. Agriculture is an important part of the Imperial Valley economy.

::

The debate over data centers is heating up in the Imperial Valley, a rural desert region in southeastern California where a proposed center faces fierce opposition from residents.

The county in 2025 granted the project an exemption from the California Environmental Quality Act, known as CEQA. The landmark 56-year-old state law has been credited with helping to preserve California’s natural beauty and protecting communities from hazardous impacts of construction projects, but it has also been blamed for stymieing construction.

Imperial Valley Computer Manufacturing, a California-based limited liability company that started two years ago, plans to develop a 950,000-square-foot facility in the county that’s designed for advanced artificial intelligence and machine learning operations. The company says it will use reclaimed wastewater and EPA-certified natural gas generators, and create 2,500 to 3,500 construction jobs and 100 to 200 permanent positions.

“We are committed to Imperial County and to creating lasting economic opportunity,” the company website states. “The project will generate $28.75 million in annual property tax revenue for local schools, fire departments, libraries, and essential services.”

The Imperial County Board of Supervisors is moving toward finalizing the proposal.

Farmland spreads out in front of the Imperial Valley Fair near a proposed data center in Imperial.

Sebastian Rucci, an attorney and chief executive officer of Imperial Valley Computer Manufacturing, said he commissioned multiple studies assessing the proposed center’s potential effect on issues like traffic or the environment that found no or minimal harms. He threatened to pull his proposal if a CEQA review was required.

“CEQA leaves you in an unknown territory — some of the environmental groups have used it for extortion, they sue, they have no basis for the suit but they delay you, and then they can squeeze money out of you for settling the lawsuit,” said Rucci.

The exemption, however, has alarmed residents, who have spoken up at county board meetings and launched a community organization, Not in My Backyard Imperial, to protest the data center and demand a CEQA review.

“It feels like it’s us against the county,” said Camarillo, adding that many feel the board has dismissed their questions and concerns.

None of the members of the Imperial County Board of Supervisors responded to requests for comment.

Resident Fernanda Camarillo’s home is right behind the proposed site of the data center in Imperial.

The center would be a neighbor to Camarillo’s house in Victoria Ranch, a family-friendly area with beige stucco homes topped with terracotta tile roofs. She worries about noise, pollution and spiking utility bills. Power companies that have to upgrade grids to meet data centers’ energy demands sometimes seek to recoup that cost by hiking up rates for all consumers.

Camarillo, a substitute teacher, is also scared for her students. The air quality in Imperial Valley is already so poor that schools use a system of color-coded flags to signal whether it’s safe for children to go outside during gym or recess, she said.

“I think they see [the valley] as easy pickings because we are a low-income community and we have such a large population of Latinos here,” Camarillo said.

A quick drive around the neighborhood shows others share her concerns. Signs protesting the data center pop up throughout the community, displayed on front lawns or nestled into rocky garden beds.

Victoria Ranch was quiet and peaceful on a sunny Sunday in late February. Francisco Leal, a resident and lead organizer for NIMBY Imperial, said that’s a major part of its appeal.

The colorful dusk sky hovers over a Little League baseball game at Freddie White Park in Imperial. The debate over data centers is heating up in the Imperial Valley, a rural desert region in southeastern California.

Leal wants answers about everything from potential health hazards and impacts on the local water supply to whether the fire department is equipped to handle a large-scale electrical blaze. But without a CEQA review, he says residents are left to trust assurances from the developer or privately hired consultants.

Leal plans to sell his property if the project goes forward, but the thought makes him emotional.

“It’s not just a house; it’s a home,” he said. “This is the only home my kids have ever known and all of our family memories are here.”

Gina Snow, another resident, isn’t necessarily against bringing a data center to the county. But she wants the proposal to undergo a CEQA review.

“Clearly we understand that there is economic development and the potential for that to be positive for the county, but at what cost?” she said.

Daniela Flores, executive director of Imperial Valley Equity and Justice, a nonprofit that works for social and environmental equality, stands on the site of the proposed data center.

::

Daniela Flores, executive director of Imperial Valley Equity and Justice, a nonprofit that works for social and environmental equality, said the community has good reason to be wary. Various industries have come into the region over the years and made grand promises that never panned out.

“We became a sacrifice zone,” she said, adding industries use the area’s resources while ultimately doing little to permanently improve the lives of most residents.

Flores said the community continues to struggle with a range of problems, including poor air quality, high poverty rates, weak worker protections and crumbling infrastructure. She believes a data center could add new and potentially dangerous challenges.

The valley has long, brutal summers with temperatures that swell to 120 degrees. If the data center strains the grid and causes a lengthy blackout, or low-income residents have their power shut off because they can’t afford the rising bills, Flores fears the situation could quickly turn deadly.

The city of Imperial also has concerns. The city has filed a lawsuit calling on the county to halt the project, arguing it should not have received a CEQA exemption.

The controversy has drawn attention from Padilla, whose district includes Imperial Valley. Padilla has echoed residents’ calls for more transparency from the county and introduced Senate Bill 887, which would ban data centers from receiving exemptions from CEQA.

“I am not anti-data center or anti-artificial intelligence,” Padilla said. But, he added, we need to “find a way to do this right and make sure there is adequate review and understanding.”

A dusty haze settles over the city of Imperial at dusk near the site of a proposed data center.

Another measure from Padilla, Senate Bill 886, would direct the Public Utilities Commission to create an electrical corporation tariff to cover the cost of data center-related grid upgrades.

Other related legislation this year includes Assembly Bill 2619 from Assemblymember Diane Papan (D-San Mateo) that would require data center owners to provide an estimate about expected water usage and sources before applying for a business license, and Assembly Bill 1577, by Assemblymember Rebecca Bauer-Kahan (D-Orinda), which would require data center owners to submit monthly information to a state commission about water and fuel consumption and energy efficiency.

While lawmakers weigh new policies at the statehouse, Camarillo said she hopes the priority will be protecting communities.

“Innovation is important, but innovation for the sake of innovation has never really been something that hasn’t had negative impacts,” she said. “Think about human lives.”

Business

Which Countries Depend the Most on Persian Gulf Oil and Gas

The war in the Middle East has halted most of the oil and gas trade from the region, forcing countries thousands of miles away to contend with their energy supplies suddenly vanishing.

The Persian Gulf accounts for roughly a fifth of the world’s energy needs. As Iran effectively blocks shipments, international prices for oil and gas have shot up. That in turn has meant gasoline, jet fuel and other products have become costlier — hurting drivers, business owners and others from Los Angeles to Lahore, Pakistan. As the world becomes gripped by the energy crisis, some nations are feeling the loss more acutely.

Asian countries are the biggest buyers of Persian Gulf energy

| Country | Total energy imports in 2024 |
| --- | --- |
| Pakistan | $17 bil. |
| Japan | $139 bil. |
| Thailand | $43 bil. |
| South Korea | $144 bil. |
| India | $180 bil. |
| Maldives | $774.1 mil. |
| Taiwan | $47 bil. |
| China | $413 bil. |
| Sri Lanka | $4 bil. |
| Malaysia | $44 bil. |
| Singapore | $86 bil. |
| Philippines | $16 bil. |
| Israel | $3 bil. |
| Brunei | $5 bil. |
| Myanmar | $5 bil. |
| Indonesia | $35 bil. |
| Armenia | $535.9 mil. |
| Turkey | $26 bil. |
| Hong Kong | $12 bil. |
| Uzbekistan | $2 bil. |
| Kazakhstan | $628 mil. |
| Yemen | $23.5 mil. |
| Azerbaijan | $2 bil. |
| Kyrgyzstan | $1 bil. |
| Jordan | $641 mil. |
| Cambodia | $3 bil. |
| Syria | $131.2 mil. |
| Bangladesh | $7 bil. |

In 2024, nearly 21 million barrels of oil a day crossed through the Strait of Hormuz, the narrow passageway connecting the Persian Gulf to the world. Four-fifths of that supply went to Asia.

China has long been the biggest purchaser of oil and gas from Persian Gulf nations. And with more than a third of its total supply coming from the region, the disruption is significant for Beijing. But other countries are almost entirely reliant on the region for their energy needs.

Pakistan has considered imposing a four-day workweek, and remote school and work, in order to preserve energy stockpiles. A state-led fund in Thailand that subsidizes the cost of fuel when prices surge plunged into a deficit this month.

In India, where the economy depends on the Middle East for roughly 40 percent of the country’s oil imports and 80 percent of its gas, a shortage of cooking gas is squeezing households. And across Asia, fliers are being stranded because airlines running low on jet fuel have canceled thousands of flights.

Europe has been more insulated, sort of

| Country | Total energy imports in 2024 |
| --- | --- |
| Greece | $19 bil. |
| Lithuania | $7 bil. |
| Poland | $28 bil. |
| Serbia | $2 bil. |
| Bulgaria | $5 bil. |
| Slovenia | $4 bil. |
| Italy | $50 bil. |
| Albania | $931.9 mil. |
| France | $73 bil. |
| Ireland | $6 bil. |
| Iceland | $1 bil. |
| U.K. | $62 bil. |
| Netherlands | $105 bil. |
| Spain | $53 bil. |
| Romania | $8 bil. |
| Denmark | $6 bil. |
| Ukraine | $8 bil. |
| Austria | $10 bil. |
| Germany | $66 bil. |
| Norway | $5 bil. |
| Portugal | $10 bil. |
| Moldova | $1 bil. |
| Cyprus | $3 bil. |
| Belgium | $47 bil. |
| Latvia | $2 bil. |
| Sweden | $18 bil. |
| Finland | $10 bil. |
| Estonia | $1 bil. |
| North Macedonia | $902.7 mil. |
| Croatia | $6 bil. |
| Switzerland | $8 bil. |
| Bosnia and Herzegovina | $1 bil. |
| Slovakia | $4 bil. |

Europe has traditionally been less reliant on the Gulf than Asia has been. It used to get most of its natural gas from Russia but has lately relied more on the United States and Norway. Even so, the continent has had to endure one energy crisis after another in recent years, including from Russia’s war with Ukraine and the Western sanctions that followed.

Russia is the world’s third-largest producer of oil and second-largest producer of gas, and the sales of its energy products have been significantly restricted while Moscow continues its invasion of Ukraine.

This current crisis comes as European countries, confronting lackluster economic output, try to rebuild their industrial bases and fend off competition from cheaper Chinese exports.

Confronted with soaring prices since its attack with Israel on Iran, the United States temporarily lifted sanctions on Russian oil that is currently at sea, hoping to ease the global supply and markets in the process. The European Union has not made similar moves.

Parts of Africa will be hit hard

| Country | Total energy imports in 2024 |
| --- | --- |
| Seychelles | $308.6 mil. |
| Mauritania | $973.5 mil. |
| Uganda | $2 bil. |
| Mauritius | $1 bil. |
| Kenya | $5 bil. |
| Egypt | $16 bil. |
| Zambia | $2 bil. |
| Namibia | $1 bil. |
| Malawi | $476.1 mil. |
| South Africa | $18 bil. |
| Tanzania | $5 bil. |
| Morocco | $8 bil. |
| Mozambique | $2 bil. |
| Madagascar | $841.3 mil. |
| Zimbabwe | $2 bil. |
| Senegal | $4 bil. |
| Nigeria | $13 bil. |
| Benin | $398.4 mil. |
| Angola | $2 bil. |
| Burkina Faso | $2 bil. |
| Tunisia | $3 bil. |
| Cote d’Ivoire | $4 bil. |
| Central African Republic | $196.7 mil. |
| Gambia | $206.6 mil. |
| Niger | $113.6 mil. |
| Lesotho | $214.4 mil. |
| Cameroon | $424.4 mil. |
| Libya | $4 bil. |

African nations, like many other countries in the global south, could feel the disruption unevenly. Seychelles, the island nation off the east coast of Africa, imported almost all of its energy from Gulf states in 2024. Mauritius has had a similar reliance, while Nigeria, an oil-rich state and a member of the OPEC Plus oil cartel, has traditionally imported relatively few fossil fuels from the Middle East.

But as the war continues, the impact is being felt beyond the imports of oil and gas. The Persian Gulf is a dominant source of fertilizer, partly because the region’s abundance of energy has spurred the development of factories that make the raw materials for many types of agricultural chemicals.

A sustained rise in the cost of fertilizer could force governments in South Asia and sub-Saharan Africa to subsidize the cost of growing crops or otherwise watch food prices climb. That could add to debt burdens afflicting many lower-income countries.

The Americas and elsewhere are feeling broader economic shocks

| Country | Total energy imports in 2024 |
| --- | --- |
| Argentina | $3 bil. |
| Brazil | $28 bil. |
| United States | $233 bil. |
| Paraguay | $2 bil. |
| Canada | $31 bil. |
| Uruguay | $1 bil. |
| Australia | $37 bil. |
| Dominican Republic | $5 bil. |
| Guatemala | $4 bil. |
| Chile | $13 bil. |
| Fiji | $888.1 mil. |
| Peru | $9 bil. |
| Honduras | $2 bil. |
| Ecuador | $5 bil. |
| Colombia | $6 bil. |
| El Salvador | $2 bil. |
| Costa Rica | $2 bil. |
| New Zealand | $6 bil. |
| Mexico | $34 bil. |
| Belize | $235.5 mil. |
| Bolivia | $2 bil. |
| Nicaragua | $1 bil. |
| Barbados | $552.3 mil. |

The United States is the world’s largest producer of oil and gas. That means the impact of halting the energy trade from the Middle East is much less severe.

But the United States and other countries in the region that do not import great quantities from the Gulf are still feeling economic strain. The jump in oil prices – to over $100 a barrel in recent weeks – has already weighed on other major economic factors.

The cost of gasoline has jumped by about a dollar a gallon nationally since the war began. American airlines have begun to cut flights because of fuel costs. Concerns about inflation have pushed mortgage rates to their highest level in three months, just weeks after they fell below 6 percent for the first time since 2022.

If the war drags on, or if oil and gas prices continue to rise, the damage will most likely grow, economists say. It is perhaps one reason why the White House has forcefully insisted that it does not need Middle Eastern oil — and is increasingly trying to use military force to stop Iran’s blockade of it.

Methodology

To calculate total energy imports for each country, The New York Times used 2024 international trade data from the Observatory of Economic Complexity and tallied the value of imports for a subset of energy-related goods. The share of those imports that came from Gulf countries was then calculated from that subset.

The Gulf countries included are: Kuwait, Iraq, Bahrain, Qatar, the United Arab Emirates, Saudi Arabia and Iran.

The categories used were: crude petroleum oils (HS 270900), bituminous petroleum distillates (HS 271000), liquefied natural gas (HS 271111), liquefied propane (HS 271112), liquefied butanes (HS 271113) and liquefied petroleum gases (HS 271119).
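For readers who want to reproduce the tally, the calculation described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not The Times' actual code: the record layout (`TradeRecord`), the function name and the sample rows are all hypothetical, and real Observatory of Economic Complexity data would need to be mapped into this shape first. Only the HS codes and the list of Gulf countries come from the methodology.

```python
from dataclasses import dataclass

# HS codes and Gulf exporters exactly as listed in the methodology above.
ENERGY_HS_CODES = {"270900", "271000", "271111", "271112", "271113", "271119"}
GULF_EXPORTERS = {
    "Kuwait", "Iraq", "Bahrain", "Qatar",
    "United Arab Emirates", "Saudi Arabia", "Iran",
}


@dataclass(frozen=True)
class TradeRecord:
    """Hypothetical row layout: one bilateral trade flow for one product."""
    importer: str
    exporter: str
    hs_code: str
    value_usd: float


def energy_import_summary(records, importer):
    """Return (total energy imports, share of that total from Gulf countries)."""
    # Keep only this importer's flows that fall in the energy subset.
    energy = [
        r for r in records
        if r.importer == importer and r.hs_code in ENERGY_HS_CODES
    ]
    total = sum(r.value_usd for r in energy)
    gulf = sum(r.value_usd for r in energy if r.exporter in GULF_EXPORTERS)
    # Guard against division by zero for importers with no energy imports.
    share = gulf / total if total else 0.0
    return total, share


# Made-up sample rows, for illustration only.
sample = [
    TradeRecord("Exampleland", "Saudi Arabia", "270900", 600.0),
    TradeRecord("Exampleland", "Norway", "271111", 400.0),
    TradeRecord("Exampleland", "Qatar", "870323", 999.0),  # not an energy code
    TradeRecord("Otherland", "Iraq", "270900", 50.0),
]

total, share = energy_import_summary(sample, "Exampleland")
print(f"Total energy imports: ${total:,.0f}; Gulf share: {share:.0%}")
```

In the sample, the non-energy row and the other importer's row are ignored, so the Gulf share is computed only against the energy subset, as the methodology specifies.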

Business

As malls and department stores fade, California’s Ross and other discounters are booming

As big malls and department stores close, bargain chains like Ross Dress for Less are rolling out new stores.

Economic anxiety and inflation are leading shoppers to spend less and search for savings. In this bombed-out retail landscape, some chains are thriving and opening new outlets.

At a new Ross in Alhambra, Liz Lopez was shopping for a designer purse. She is a big fan of the Dublin-based chain and thrilled to now have one just 10 blocks from her home.

People check out after shopping at a newly opened Ross store.

(Jason Armond / Los Angeles Times)

“I come on Tuesdays for the senior discounts,” Lopez said, showing off her new black Dolce & Gabbana purse. “I always find good deals.”

The new store on East Valley Boulevard opened this month. One of its sister shops — dd’s Discounts, which is owned by the same parent company — opened in North Hollywood.

This year, the parent company, Ross Stores Inc., plans to open 110 new outlets across the country, after 90 last year.

Ross Chief Executive Jim Conroy said Ross is capturing market share by attracting customers away from other retail chains.

“The share shift is more from mainstream retail, department stores and other places like that,” he told analysts after announcing strong growth early this month.

Other discount outlets, including T.J. Maxx, Dollar General, Nordstrom Rack and Five Below, are also expanding to capitalize on tough times.

Retail data show shoppers are visiting a broader spectrum of destinations to find lower prices, said Placer.ai, which tracks people’s movements based on cellphone usage.

“Consumers have become increasingly selective and price-sensitive, actively pivoting away from traditional mid-market chains in favor of discount retailers and value-oriented brands,” Placer.ai said in a report this month. “Because affordability remains a core focus, average households are spreading their visits across a wider number of non-discretionary stores to hunt for deals.”

Discount retailers have been popular for decades, but a combination of factors is now driving accelerated growth for some, experts said.

Dollar stores and the first off-price retailers rose to popularity in the 1990s, but really took off around 2010 following the recession, according to Dylan Carden, a specialty retail analyst at William Blair.

Since then, the stigma surrounding bargain stores has lessened for both customers and brands.

“They’re phenomenal at what they do,” Carden said of the major off-price retailers, including Ross and TJX, which owns T.J. Maxx, Marshalls and HomeGoods.

In the last year or so, well-established retailers that were already grappling with intense competition from online retailers have been hit as their customers cut back on discretionary spending amid inflation, tariffs and global conflict.

Savings signs on the walls at a newly opened Ross store in Alhambra.

(Jason Armond / Los Angeles Times)

For stores such as Ross, this dip in demand at department stores means a larger supply of discounted products, as they often buy unsold merchandise from struggling high-end outlets and manufacturers.

“These companies offer a tremendous value to shoppers, but they perhaps offer an even greater value to the brands,” said Simeon Siegel, a senior managing director at Guggenheim Partners. “They’ve solidified their role in the retail ecosystem.”

Five Below, the Pennsylvania-based discount outlet aimed at teens and tweens, opened 150 new stores in 2025 and has plans to open more this year. Its same-store sales rose 15% in the fourth quarter last year.

Ross sells everything from neckties to shower curtains. Its fourth-quarter profits last year rose 10% from the year prior. Ross reported record sales for 2025 of $22.8 billion, up 8% from the year prior. Its net income was $2.1 billion, similar to 2024, while comparable store sales grew 5%.

Investors have been happy with its outperformance.

Ross shares surged around 70% over the past year. TJX shares rose around 30%.

A man exits after shopping at a newly opened Ross store.

(Jason Armond / Los Angeles Times)

TJX has also seen year-over-year increases in sales and net income, according to its most recent earnings release. It plans to open 146 new stores this year.

“The revenues, the stores, the businesses are doing excellent,” Siegel said. “They are absolutely in their stride.”

In contrast, some department stores are struggling.

Macy’s closed two California locations earlier this year as part of its plan to reduce its footprint by 30% by 2027. Twelve more closures are planned in the coming months across the U.S.

Saks Global, which owns Saks Fifth Avenue and Neiman Marcus, filed for Chapter 11 bankruptcy protection in January, citing overwhelming debt.

“The department store pressure and the off-price success are not coincidental,” Siegel said. “They are clearly linked. Off-price has effectively become the new department store.”

In addition to opening new stores, Ross is working to streamline the shopping process by better organizing its stores and adding self-checkout at more branches.

The new Ross in Alhambra has several self-checkout lanes and well-stocked aisles organized into categories such as apparel, technology and cosmetics.

Lopez, a regular at Ross Dress for Less, put a pack of clothing hangers in her cart along with her new purse before checking out.

“I always seem to find what I need,” she said.
