Business

L.A. fire victims say state regulators ignored complaints about State Farm

Last spring, victims of the Los Angeles wildfires complained loudly and en masse over how State Farm General was handling their insurance claims, especially for smoke damage.

Insurance Commissioner Ricardo Lara urged them to lodge formal complaints with the department.

“That’s how we track and how we monitor, and we make sure that we follow through … make sure that those claims are being addressed,” he told several hundred fire victims in a Zoom forum in May.

Nearly a year later, however, many homeowners and their representatives say the promise was hollow. They voice mounting frustration over how the California Department of Insurance investigated their complaints about State Farm.

More than a dozen homeowners and their representatives told The Times that the department did little to resolve a wide range of complaints, or prevent new problems, in State Farm’s handling of their claims.

“Seventy percent of insured Eaton and Palisades fire survivors are facing delays and denials that are impeding their recovery,” said Joy Chen, executive director of the Eaton Fire Survivors Network, citing a survey by the nonprofit Department of Angels. “That is evidence of the failure of this department to do its job.”

Policyholders shared complaints lodged against State Farm over denials to pay for the cleanup of fire toxins, rebuild estimates well below actual construction costs and delayed checks for living expenses. To the state they cited frequent turnover in adjusters and demands to sign legal papers agreeing to forgo future reimbursement for personal items without itemized receipts.

Now, they said, State Farm is cutting off prepaid rentals and leases for fire victims who aren’t close to returning home.

Most of the fire victims said they were left in the dark about their cases, and were told to stop trying to communicate with their complaint handlers. Some said their cases were closed before their insurance disputes were settled.

“It doesn’t feel like it’s an actual, legitimate organization that’s meant to protect consumers,” said Len Kendall, who lost his home to the Pacific Palisades fire.

Kendall initially complained to the state about State Farm in July, citing delays in handling his total loss claim, dealing with multiple adjusters and struggles to get reimbursed for living expenses. Later he said he was told to stop communicating with the state and to send his records “directly and solely” to State Farm.

“We’re told that they’re tracking information and speaking to the insurers, but we have no idea what is happening,” Kendall said. “When it comes to the [insurance department], we’re all totally in the dark.”

A spokesperson for State Farm declined to address complaints from L.A. fire victims.

A representative for the state insurance department declined to comment on its handling of complaints against State Farm.

The agency did say it had “recovered” more than $210 million for fire victims “through its intervention and aggressive advocacy on these complaints.”

“We do our best to approach every wildfire survivor with empathy and understanding,” Michael Soller, spokesman for the insurance department, said late Wednesday. “Our goal is helping people recover fully, fairly, and quickly. We hold ourselves to the highest standards.”

He encouraged those with insurance disputes to contact the department. “We will do our best to expedite their claims,” he said.

The mistrust between fire victims and the department has been deepened by newly released records showing the department disciplined one of its senior complaint handlers after she criticized State Farm over its claims handling, according to personnel records reviewed by The Times.

In a July letter to a State Farm case manager, Coleen Vandepas — a 32-year-veteran of the department who had previously been commended for her work on behalf of policyholders — accused the insurer of “shoddy” and “shameful” handling of an L.A. fire claim, including claiming it did not have test results within the insurer’s possession. She demanded the company apologize to its policyholder. In another policyholder’s case, she said State Farm engaged in a “pattern and practice” of delay.

Records show that days later, a State Farm lawyer called a top-level executive at the insurance department to complain about Vandepas’ statements.

Vandepas’ State Farm caseload was subsequently reassigned and she was docked 10% of her pay, according to personnel records. Her supervisors said Vandepas had made “accusatory” and “improper” remarks about State Farm, and cited a LinkedIn post State Farm had called attention to, in which she characterized insurance company threats to leave California as “wailing” by companies that wanted to “make huge amounts off the backs of the citizens of California.”

A state personnel board law judge reviewing the discipline called Vandepas’ remarks “rude and disparaging” and the full board this month rejected her appeal. A new appeal has been filed with the California Public Employee Relations Board, noting Vandepas was also protected as a union steward and was in part punished for raising internal workload issues.

The workplace action has angered advocates for wildfire victims.

“This sends a message to every single person who works at [the California Department of Insurance]: ‘You may be next,’” said Chen, a former deputy mayor of Los Angeles.

Through its corporate media office in Illinois, State Farm declined to comment on the sanctions against Vandepas.

“We are not a party to the case in question,” the Illinois-based insurer said in a statement. “We have ongoing relationships with state regulators so we can best meet the needs of our customers.”

Investigations into State Farm

State Farm was in the midst of dropping some 72,000 policies in California, and seeking a $1.3-billion rate hike, when the Jan. 7, 2025, firestorm ravaged Los Angeles. The disaster killed 31, destroyed more than 16,000 structures, and left many others unable to return to their homes. As of November, the insurance department reported more than 42,000 home and commercial insurance claims.

By far, the largest share of those claims are with State Farm General, the California subsidiary of State Farm Mutual. A survey of about 2,300 fire victims by the Department of Angels noted State Farm policyholders reported higher rates of claim denials, low estimates and other complaints than customers of other insurers.

Los Angeles County in November opened its own investigation into State Farm’s claims handling, demanding the insurer turn over reams of information, including company policy guides and training materials for handling fire and smoke claims, among other documents.

In June, Lara launched what he called an expedited market conduct exam of State Farm. The findings have yet to be released.

Lara rejected pressure from wildfire victim advocates to delay an interim 17% emergency hike until State Farm’s claims practices could be examined. He said they would be taken up in the full rate review. There have been no public hearings on the full hike. The case could be settled by the end of the month, state lawyers told a judge this week.

The insurance giant has a history of pushing strongly against regulators.

The company has refused to provide financial records sought by California actuaries attempting to judge the merit of its pending rate hike, including plans to drop another 11,000 policies, according to public rate filing records obtained by The Times.

The insurance department tracks complaints by disaster, as well as by insurer, but has rejected public record requests for that data. Its consumer complaint group has just 34 employees and hasn’t changed staffing levels despite the surge in wildfire claims in 2025, according to California payroll records.

Internal agency emails show a State Farm executive in May 2025 told Lara the insurer had received fewer than 310 policyholder complaints among 10,359 Los Angeles fire claims at the time. (Most of the cases reviewed by The Times were filed later.)

“SFG is not an outlier with respect to the number of complaints received in relation to the number of claims from the January 2025 wildfires,” State Farm General CEO Dan Krause wrote to Lara.

Insurance companies have 21 days to respond when a complaint is filed, and then state compliance officers can review the record for adherence with insurance law. They cannot make a determination of fault, or the size of an award. In a process kept confidential, they can challenge insurers with questions, asking them to explain their decisions. If they see violations, they cannot take action against an insurer. And they cannot tell the policyholder.

The insurance department contends the complaint process has resulted in the reversal of claim denials, increased payouts and agreements in individual cases to test for the toxic residues of wildfire smoke.

But interviews and records reviewed by The Times revealed inconsistencies in how wildfire disaster complaints were handled.

Some compliance officers told policyholders to stop sharing correspondence with their insurance companies or adjusters, saying they would read the claim files for themselves. Policyholders frustrated by the silence sought to file new complaints or have their cases reassigned, only to be refused.

After five months of sending protests about a “non-responsive” compliance officer, one fire victim was told by a bureau supervisor that she had two other alternatives to resolve her insurance dispute: seek a lawyer or file a lawsuit.

Three officers attempted to close policyholder cases even though the insurance claim remained in dispute. In one instance, a compliance officer referenced the wrong insurance company and the wrong issue being contested, letters shared with The Times show.

Andrew Wessels said State Farm prematurely closed his case after he challenged the insurer’s initial refusal to address toxic residues in his house, left standing among the rubble of the Eaton fire, or its failure to pay living expenses.

For months, Wessels repeatedly wrote to alert his compliance officer that State Farm was making false claims. The state reviewer wrote back once to acknowledge receipt of further complaints he would add to the case file. Then in October the case officer tried to close the still-disputed State Farm claim, calling it “in stable condition.”

“The Department would find its task of regulating the insurance industry much more difficult without the help of consumers like you,” the closure letter said.

Wessels protested and his case was reopened. He continues to wrestle with State Farm over safety tests, delayed living expenses and ever-changing adjusters. He emails updates to his state insurance compliance officer.

“I just periodically send an email into oblivion, basically,” he said.

Business

How the S&P 500 Stock Index Became So Skewed to Tech and A.I.

Nvidia, the chipmaker that became the world’s most valuable public company two years ago, was alone worth more than $4.75 trillion as of Thursday morning. Its value, or market capitalization, is more than double the combined worth of all the companies in the energy sector, including oil giants like Exxon Mobil and Chevron.

The chipmaker’s market cap has swelled so much recently, it is now 20 percent greater than the sum of all of the companies in the materials, utilities and real estate sectors combined.

What unifies these giant tech companies is artificial intelligence. Nvidia makes the hardware that powers it; Microsoft, Apple and others have been making big bets on products that people can use in their everyday lives.

But as worries grow over lavish spending on A.I., as well as the technology’s potential to disrupt large swaths of the economy, the outsize influence that these companies exert over markets has raised alarms. They can mask underlying risks in other parts of the index. And if a handful of these giants falter, it could mean widespread damage to investors’ portfolios and retirement funds in ways that could ripple more broadly across the economy.

The dynamic has drawn comparisons to past crises, notably the dot-com bubble. Tech companies also made up a large share of the stock index then — though not as much as today, and many were not nearly as profitable, if they made money at all.

How the current moment compares with past pre-crisis moments

To understand how abnormal and worrisome this moment might be, The New York Times analyzed data from S&P Dow Jones Indices that compiled the market values of the companies in the S&P 500 in December 1999 and August 2007. Each date was chosen roughly three months before a downturn to capture the weighted breakdown of the index before crises fully took hold and values fell.

The companies that make up the index have periodically cycled in and out, and the sectors were reclassified over the last two decades. But even after factoring in those changes, the picture that emerges is a market that is becoming increasingly one-sided.

In December 1999, the tech sector made up 26 percent of the total.

In August 2007, just before the Great Recession, it was only 14 percent.

Today, tech is worth a third of the market, as other vital sectors, such as energy and those that include manufacturing, have shrunk.

Since then, the huge growth of the internet, social media and other technologies propelled the economy.

Now, never has so much of the market been concentrated in so few companies. The top 10 make up almost 40 percent of the S&P 500.

How much of the S&P 500 is occupied by the top 10 companies

With greater concentration of wealth comes greater risk. When so much money has accumulated in just a handful of companies, stock trading can be more volatile and susceptible to large swings. One day after Nvidia posted a huge profit for its most recent quarter, its stock price paradoxically fell by 5.5 percent. So far in 2026, more than a fifth of the stocks in the S&P 500 have moved by 20 percent or more. Companies and industries that are seen as particularly prone to disruption by A.I. have been hard hit.

The volatility can be compounded as everyone reorients their businesses around A.I., or in response to it.

The artificial intelligence boom has touched every corner of the economy. As data centers proliferate to support massive computation, the utilities sector has seen huge growth, fueled by the energy demands of the grid. In 2025, companies like NextEra and Exelon saw their valuations surge.

The industrials sector, too, has undergone a notable shift. General Electric was its undisputed heavyweight in 1999 and 2007, but the recent explosion in data center construction has evened out growth in the sector. GE still leads today, but Caterpillar is a very close second. Caterpillar, which is often associated with construction, has seen a spike in sales of its turbines and power-generation equipment, which are used in data centers.

One large difference between the big tech companies now and their counterparts during the dot-com boom is that many now earn money. A lot of the well-known names in the late 1990s, including Pets.com, had soaring valuations and little revenue, which meant that when the bubble popped, many companies quickly collapsed.

Nvidia, Apple, Alphabet and others generate hundreds of billions of dollars in revenue each year.

And many of the biggest players in artificial intelligence these days are private companies. OpenAI, Anthropic and SpaceX are expected to go public later this year, which could further tilt the market dynamic toward tech and A.I.

Methodology

Sector values reflect the GICS code classification system of companies in the S&P 500. As changes to the GICS system took place from 1999 to now, The New York Times reclassified all companies in the index in 1999 and 2007 with current sector values. All monetary figures from 1999 and 2007 have been adjusted for inflation.

Business

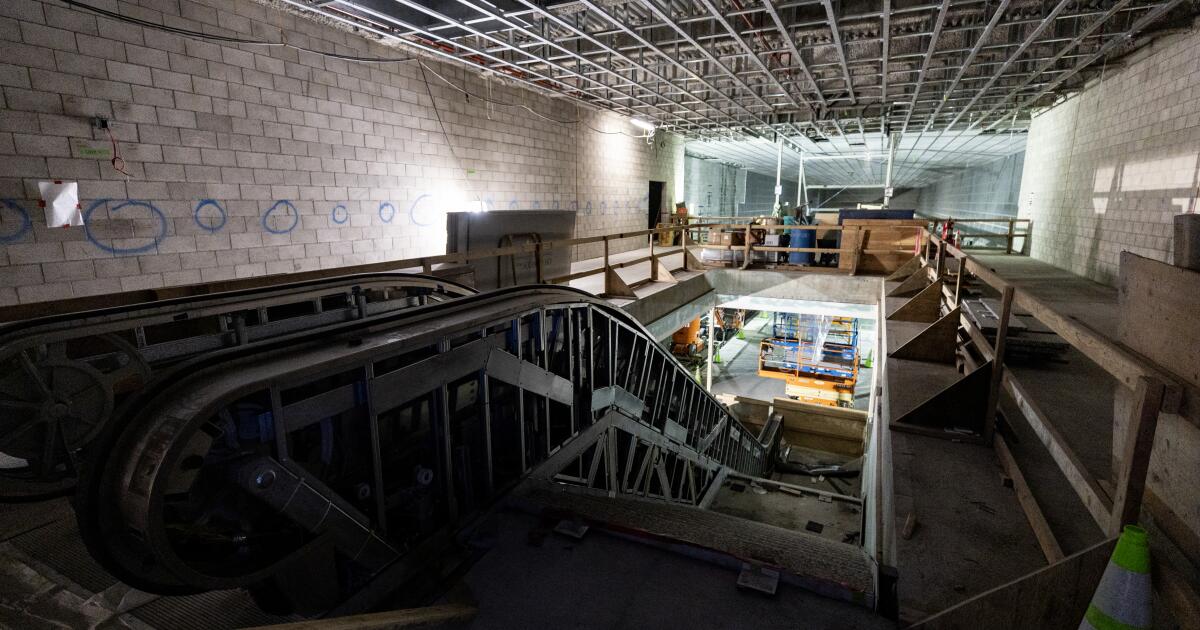

Coming soon: L.A. Metro stops that connect downtown to Beverly Hills, Miracle Mile

Metro has announced it will open three new stations connecting downtown Los Angeles to Beverly Hills in May.

The new stations mark the first phase of a rail extension project on the Metro D Line, also known as the Purple Line, beneath Wilshire Boulevard. The extension will open to the public on May 8.

It’s part of a broader plan to enhance the region’s transit infrastructure in time for the 2028 Olympic and Paralympic Games.

The new stations will take riders west past the existing Wilshire/Western station in Koreatown, stopping along the Miracle Mile before arriving in Beverly Hills. The 3.92-mile addition winds through Hancock Park, Windsor Square, the Fairfax District and Carthay Circle. The stations will be located at Wilshire/La Brea, Wilshire/Fairfax and Wilshire/La Cienega.

This is the first of three phases in the D Line extension project. The completion of this phase, budgeted at $3.7 billion, comes months later than earlier projections. Metro said in 2025 it expected to wrap up the phase by the end of the year.

The route between downtown Los Angeles and Koreatown is one of Metro’s most heavily used rail lines, with an average of around 65,000 daily boardings. The Purple Line extension project — with the goal of adding seven stations and expanding service on the line to Hancock Park, Century City, Beverly Hills and Westwood — broke ground more than a decade ago. Metro’s goal is to finish by the 2028 Summer Olympics.

In a news release on Thursday, Metro described its D Line expansion as “one of the highest-priority” transit projects in its portfolio and “a historic milestone.”

“Traveling through Mid-Wilshire to experience the culture, cuisine and commerce across diverse neighborhoods will be easier, faster and more accessible,” said Fernando Dutra, Metro board chair and Whittier City Council member, in the release. “That connectivity from Downtown LA to the westside will serve as a lasting legacy for all Angelenos.”

The D Line was closed for more than two months last year for construction under Wilshire Boulevard, contributing to a 13.5% drop in ridership that was exacerbated by immigration raids in the area.

“I can’t wait for everyone to enjoy and discover the vibrance of mid-Wilshire without the traffic,” Metro CEO Stephanie Wiggins said in a statement.

Business

Commentary: AI isn’t ready to be your doctor yet — but will it ever be?

As almost everybody knows, the AI gold rush is upon us. And in few fields is it happening as fast and furiously as in healthcare.

That points to an important corollary: Beware.

Artificial intelligence technology has helped radiologists identify anomalies in images that human users have missed. It has some evident benefits in relieving doctors of the back-office routines that consume hours better spent treating patients, such as filing insurance claims and scheduling appointments.

But it has also been accused of providing erroneous information to surgeons during operations that placed their patients at grave risk of injury, and fomenting panic among users who take its offhand responses as serious diagnoses.

The commercial direct-to-consumer applications being promoted by AI firms, such as OpenAI’s ChatGPT Health and Anthropic’s Claude for Healthcare, both of which were introduced in January, raise special concerns among medical professionals. That’s because they’ve been pitched to users who may not appreciate their tendency to output erroneous information and offer inappropriate advice.

“Eventually, a lot of this stuff is going to be great, but we’re not there yet,” says Eric Topol, a cardiologist associated with Scripps Research Institute in La Jolla.

“The fact that they’re putting these out without enough anchoring in safety and quality and consistency concerns me,” Topol says. “They need much tighter testing. The problem I have is that these efforts are largely stemming from commercial interests — there’s furious competition to be the first to come out with an app for patients, even if it’s not quite ready yet.”

That was the experience reported by Washington Post technology columnist Geoffrey A. Fowler, who provided ChatGPT with 10 years of health data compiled by his Apple Watch — and received a warning about his cardiac health so dire that it sent him to his cardiologist, who told him he was in the bloom of health.

Fowler also sought out Topol, who reviewed the data and found the chatbot’s warning to be “baseless.” Anthropic’s chatbot also provided Fowler with a health grade that Topol deemed dubious.

“Claude is designed to help users understand and organize their health information, framing responses as general health information rather than medical advice,” an Anthropic spokesman told me by email. “It can provide clinical context—for example, explaining how a lab value compares to diagnostic thresholds—while clearly stating that formal diagnosis requires professional evaluation.”

OpenAI didn’t respond to my questions about the safety and reliability of its consumer app.

Topol, who has written extensively about advanced technology in medicine, is nothing like an AI skeptic. He calls himself an AI optimist, citing numerous studies showing that artificial intelligence can help doctors treat patients more effectively and even improve their bedside manner.

But he cautions that “healthcare can’t tolerate significant errors. We have to minimize the errors, the hallucinations, the confabulations, the BS and the sycophancy” that AI technology commonly displays.

In medicine, as in many other fields, AI looks to have been oversold as a labor-saving technology. According to a study of AI-equipped stethoscopes provided to about 100 British medical groups published earlier this month in the Lancet, the British medical journal, the high-tech stethoscopes effectively identified some (but not all) indications of heart failure better than conventional stethoscopes. But 40% of the groups abandoned the new devices during the 12-month period of the study.

The main complaint was the “additional workflow burden” experienced by the users — an indication that whatever the virtues of the new technology, they didn’t outweigh the time and effort needed to use them.

Other studies have found that AI can augment physicians’ skills — when the doctors have learned to trust their AI tools and when they’re used in relatively uncomplicated, even generic, conditions.

The most notable benefits have been found in radiology; according to a Dutch study published last year, radiologists using AI to help interpret breast X-rays did as well in finding cancers as two radiologists working together. That suggested that judicious use of AI could free up time for one of the two radiologists. But in this case as in others, the AI helper didn’t do consistently well.

“AI misses some breast cancers that are recalled by human assessment,” a study author said, “but detects a similar number of breast cancers otherwise missed by the interpreting radiologists.”

AI’s incursion into healthcare has even become something of a cultural touchstone: In HBO’s up-to-the-minute emergency room series “The Pitt,” beleaguered ER doctors discover that an AI app pushed on them as a time-saving charting tool has “hallucinated” a history of appendicitis for a patient, endangering the patient’s treatment.

“Generative AI is not perfect,” the app’s sponsor responds. “We still need to proofread every chart it creates” — thus acknowledging, accurately, that AI can increase, not relieve, users’ workloads.

A future in which robots perform surgical operations or make accurate diagnoses remains the stuff of science fiction. In medicine, as elsewhere, AI technology has been shown to be useful to take over automatable tasks from humans, but not in situations requiring human ingenuity or creativity — or precision. And attempts to use AI-related algorithms to make healthcare judgments have been challenged in court.

In a class-action lawsuit filed in Minnesota federal court in 2023, five Medicare patients and survivors of three others allege that UnitedHealth Group, the nation’s largest medical insurer, relied on an AI algorithm to deny coverage for their care, “overriding their treating physicians’ determinations as to medically necessary care based on an AI model” with a 90% error rate.

The case is pending. In its defense, UnitedHealth has asserted that decisions on whether to approve or deny coverage remain entirely in the hands of physicians and other clinical professionals the company employs, and their decisions on coverage and care comply with Medicare standards.

The AI algorithm cited by the plaintiffs, UnitedHealth says, is not used “to deny care to members or to make adverse medical necessity coverage determinations,” but rather to help physicians and patients “anticipate and plan for future care needs.” The company didn’t address the plaintiffs’ assertion about the algorithm’s error rate.

“We shouldn’t be complacent about accepting errors” from AI tools, Topol told me. But it’s proper to wonder whether that message has been absorbed by promoters of AI health applications.

Disclaimers warning that AI responses “are not professionally vetted or a substitute for medical advice” have all but disappeared from AI platforms, according to a survey by researchers at Stanford and UC Berkeley.

The issue becomes more urgent as the language of chatbots becomes more sophisticated and fluent, inspiring unwarranted confidence in their conclusions, the researchers cautioned. “Users may misinterpret AI-generated content as expert guidance,” they wrote, “potentially resulting in delayed treatment, inappropriate self-care, or misplaced trust in non-validated information.”

Typically, state laws require that medical diagnoses and clinical decisions proceed from physical examinations by licensed doctors and after a full workup of a patient’s medical and family history. They don’t necessarily rule out doctors’ use of AI to help them develop diagnoses or treatment plans, but the doctors must remain in control.

The Food and Drug Administration exempts medical devices from government licensing if they’re “intended generally for patient education, and … not intended for use in the diagnosis of disease or other conditions.” That may cover AI bots if they’re not issuing diagnoses.

But that may not help users who have willingly uploaded their medical histories and test results to AI bots, unaware of concerns, including whether their information will be kept private or used against them in insurance decisions. Gaps in their uploaded data may affect the advice they receive from bots. And because the bots know nothing except the content they’ve been fed, their healthcare outputs may reflect cultural biases in the basic data, such as ethnic disparities in disease incidence and treatment.

“If there’s a mistake with all your data, you could get into a pretty severe anxiety attack,” Topol says. “Patients should verify, not just trust” what they’ve heard from a bot.

Topol warns that the negative effect of misleading AI information may fall not only on patients but on the AI field itself. “The public doesn’t really differentiate between individual bots,” he told me. “All we need are some horror stories” about misdiagnoses or dangerous advice, “and that whole area is tarred.”

In his view, that would limit the promise of technologies that could improve the effectiveness of medical practice in many ways. The remedy is for AI applications to be subjected to the same clinical standards applied to “a drug, a device, a diagnostic. We can’t lower the threshold because it’s something new, or different, with some broad appeal.”
